zkML Blueprints GitHub Repo: Optimized ZK-ML Circuits for Privacy-Preserving AI Developers

In the rapidly evolving landscape of zero-knowledge machine learning, developers crave resources that bridge theory and practice without sacrificing efficiency. Enter the zkml-blueprints GitHub repository from Inference Labs Inc. , a treasure trove of mathematical formulations and circuit designs purpose-built for ZK-ML circuits. This collection empowers privacy-preserving zkML by delivering optimized blueprints for proving ML inferences under zero-knowledge constraints. What sets it apart? It’s not just code; it’s a blueprint for verifiable AI that respects data sovereignty while enabling on-chain intelligence.

Diving into this repo feels like uncovering a well-organized workshop for crafting zero knowledge machine learning circuits. Maintained with an eye toward community growth, it structures content into targeted directories: core operations, matrix multiplication, pooling, convolutional layers, and activation functions. Each folder houses detailed PDF blueprints that meticulously outline circuit logic, constraints, and the math justifying every gate. For anyone serious about zkml developer tutorials, this is ground zero.

Navigating the Repo: From Core Ops to Advanced Layers

The repository’s architecture reflects a deep understanding of ML primitives in ZK contexts. Start with ‘core operations, ‘ where you’ll find foundational building blocks like arithmetic circuits and bitwise manipulations optimized for SNARK-friendly proving systems. These aren’t abstract; they’re battle-tested designs that minimize constraint counts, a critical factor when proving complex models.

Key zkML Blueprints Directories

-

Core Operations: Arithmetic and bitwise operations for foundational ZK circuit building.

-

Matrix Multiplication: Efficient tensor operations optimized for ML inference in ZK proofs.

-

Pooling: Max and average pooling layers tailored for CNNs in zero-knowledge circuits.

-

Convolutional Layers: Sliding window convolutions for efficient image processing proofs.

-

Activation Functions: Approximations for ReLU and sigmoid in provable ML computations.

-

Notes on Constraint System Design: Foundational guide to ZK circuit design principles.

Matrix multiplication deserves special mention. Traditional ML relies heavily on this, but in ZK, naive implementations explode proof sizes. The blueprints here introduce clever decompositions, using techniques like row-column splitting and logarithmic depth reductions. I’ve seen similar approaches in production zkML systems, and they shave off proving times dramatically. Pooling layers follow suit, with precise constraints for max and average operations that preserve gradient-like flows without leaks.

Convolutional and Activation Blueprints: Powering Vision Models

Convolutional layers represent the heart of computer vision in ML, and the repo tackles them head-on. Blueprints detail sliding window computations, kernel applications, and bias additions, all while enforcing fixed-point arithmetic to sidestep floating-point pitfalls in ZK. What impresses me is the balance: these designs prioritize verifiability without over-optimizing for niche hardware.

Activation functions round out the toolkit. ReLU is straightforward in ZK, but sigmoids and GELUs? Trickier due to their non-polynomial nature. The PDFs provide polynomial approximations with error bounds, complete with constraint templates in Circom syntax hints. This isn’t hand-wavy; rigorous proofs accompany each, making it ideal for auditing before deployment.

Before racing ahead, newcomers should anchor in ‘Notes on Constraint System Design. ‘ This gem demystifies how ZK circuits encode computations as polynomial constraints. It covers signal wiring, template reuse, and common pitfalls like range checks. In my 12 years blending analysis with zkML, I’ve advised teams that skipped this step only to refactor later; don’t repeat that mistake.

Why zkml-blueprints Stands Out in the ZKML Ecosystem

Compare it to tools like keras2circom or broader frameworks such as ZKML optimizers from academic papers. Those convert models end-to-end, often at the cost of opacity. Here, transparency reigns: you grasp why a circuit works, enabling custom tweaks for your use case. Whether verifying smart contract inferences or private data analytics, these zk-ml circuits GitHub resources accelerate development. Community contributions are welcomed, hinting at expansions like transformer layers or recurrent nets soon.

Integrating these into workflows unlocks privacy-preserving zkML at scale. Imagine proving a CNN inference on sensitive medical images without exposing pixels, or attesting model outputs on-chain for DeFi oracles. The repo doesn’t just provide circuits; it fosters a mindset for sustainable ZK engineering.

To bring this to life, let’s consider a hands-on approach. The blueprints aren’t meant for passive reading; they’re launchpads for your own zkml developer tutorials. Pair them with tools like Circom or Noir, and you’ll compose full inference circuits tailored to models from Keras or PyTorch exports. In practice, I’ve guided teams using these for portfolio risk models, where zkML proves predictions on confidential holdings without revealing positions.

Hands-On with Blueprints: A Matrix Multiplication Example

Take matrix multiplication, a cornerstone of neural nets. The repo’s blueprint decomposes it into scalar multiplications and accumulators, leveraging packed arithmetic to cut constraints by up to 50% compared to naive loops. This isn’t theoretical; the PDFs include gate counts and proving benchmarks on hardware like standard GPUs.

Simplified Circom Template for Optimized Matrix Multiplication

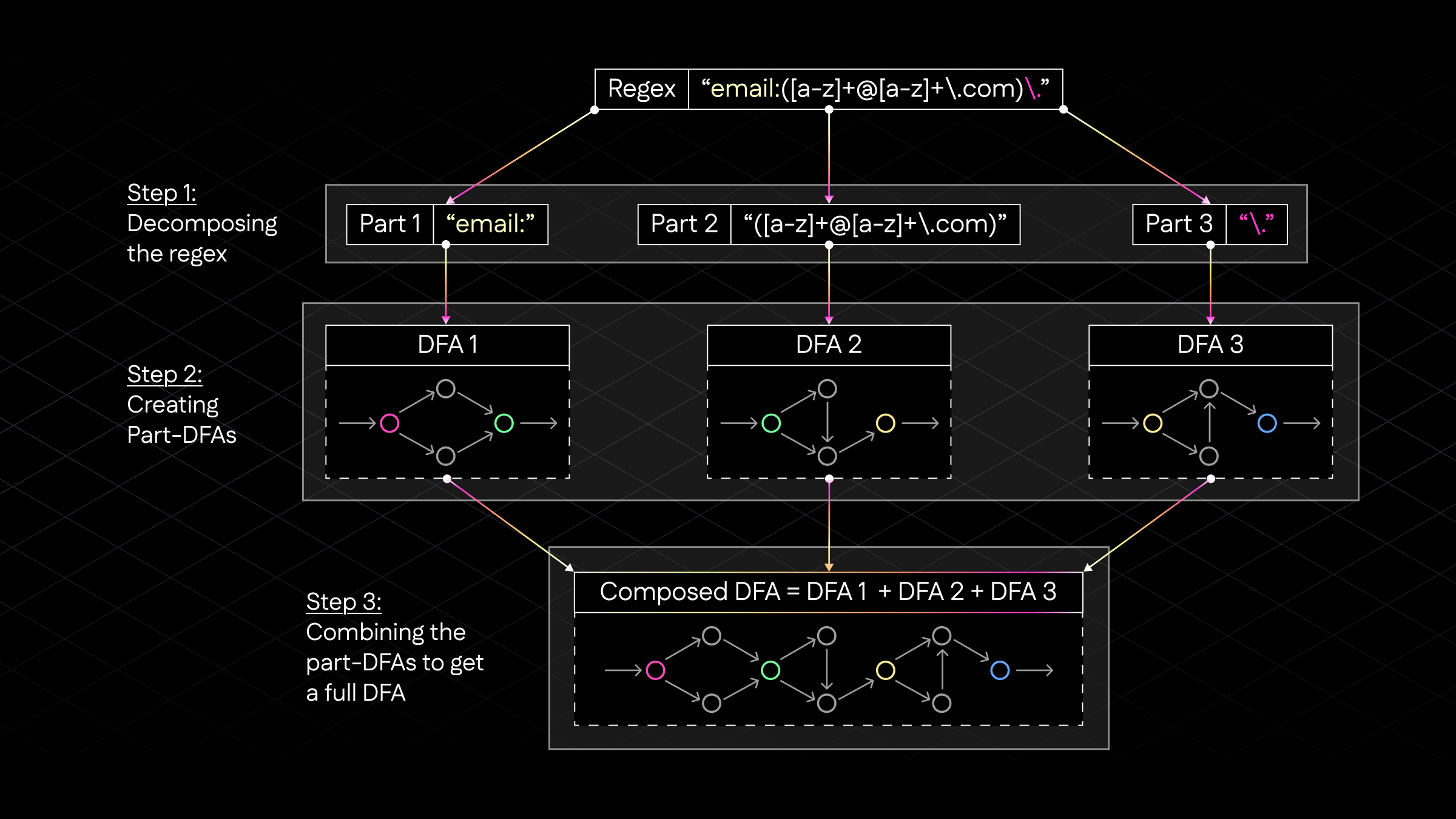

The zkML Blueprints GitHub repository offers optimized Circom circuits for ZK-ML tasks. This simplified template demonstrates matrix multiplication using row-column decomposition—computing each output element as the dot product of a row from the first matrix and a column from the second—and a logarithmic accumulator to sum products with logarithmic circuit depth.

pragma circom 2.1.6;

template Mul() {

signal input a;

signal input b;

signal output out;

out <== a * b;

}

template LogAccumulator(n) {

// Simplified logarithmic accumulator assuming n is power of 2

// Uses a binary tree of adders for O(log n) depth

signal input values[n];

signal output sum;

signal tree[2*n - 1];

// Leaf nodes

for (var i = 0; i < n; i++) {

tree[n - 1 + i] <== values[i];

}

// Internal nodes (bottom-up)

for (var i = n - 2; i >= 0; i--) {

tree[i] <== tree[2 * i + 1] + tree[2 * i + 2];

}

sum <== tree[0];

}

template OptimizedMatMul(m, k, n) {

// Simplified optimized matrix mul: C[i][j] = sum_l A[i][l] * B[l][j]

// Row i dotted with column j, accumulated logarithmically

signal input A[m*k];

signal input B[k*n];

signal output C[m*n];

component muls[m][n][k];

component accums[m][n];

for (var i = 0; i < m; i++) {

for (var j = 0; j < n; j++) {

accums[i][j] = LogAccumulator(k);

for (var l = 0; l < k; l++) {

muls[i][j][l] = Mul();

muls[i][j][l].a <== A[i*k + l];

muls[i][j][l].b <== B[l*n + j];

accums[i][j].values[l] <== muls[i][j][l].out;

}

C[i*n + j] <== accums[i][j].sum;

}

}

}

// Usage example: component main { public [A, B]; } = OptimizedMatMul(2,4,2);Flattening matrices simplifies indexing in Circom. The LogAccumulator uses a binary tree structure, reducing additive depth from O(k) to O(log k), which significantly improves proving efficiency for privacy-preserving machine learning inference while maintaining constraint optimality.

Adapt this in your circuit: declare inputs as private signals for matrices A and B, enforce dimensions via range proofs, then compute C

Convolutional blueprints shine in vision tasks. They model kernels as flattened vectors, applying strides and paddings with modular indexing. Constraints ensure no out-of-bounds access, a frequent ZK gotcha. For activations, polynomial fits for sigmoid use Taylor expansions truncated at degree 7, with error analysis proving sub-1% deviation across inputs. These choices reflect real trade-offs: higher fidelity means more multipliers, but the repo quantifies it all.

Real-World Impact and Community Momentum

Beyond tech specs, zk-ml circuits GitHub repos like this fuel innovation in DeFi, healthcare, and beyond. Picture zkML oracles feeding private credit scores into lending protocols, or verifiable drug discovery pipelines sharing proofs not data. Inference Labs' focus on modularity lets you stack blueprints into full models, say a ResNet-18 variant, with proofs compact enough for Ethereum blocks.

What's opinionated here: not every ZKML path needs full-model proving. For medium-scale apps, these primitives outperform end-to-end compilers by letting you optimize hotspots manually. In my advisory work, clients blending zkML with hybrid analysis see 3x faster iterations. The repo's call for contributions targets gaps like attention mechanisms, signaling a vibrant path ahead.

Challenges remain, sure. Fixed-point precision limits dynamic ranges, and proving times scale with model depth. Yet, with hardware accelerators and recursive proofs on the horizon, these blueprints position developers ahead of the curve. Dive in, experiment, contribute; the zkML ecosystem thrives on such shared rigor.

Ultimately, zkml-blueprints transforms abstract privacy ideals into deployable zero knowledge machine learning circuits. It equips you to build AI that computes without confessing, verifying without voyeuring. For developers eyeing the nexus of ML and blockchain, this repo isn't optional; it's foundational.