zkML for Privacy-Preserving AI Agents: Verifying Outputs Without Data Exposure

In an era where AI agents autonomously handle tasks from financial forecasting to personalized recommendations, the paramount concern remains data privacy. These intelligent systems process vast amounts of sensitive information, yet traditional setups expose users and model owners to data leaks or model theft. Zero-knowledge machine learning, or zkML, offers a prudent solution: it allows AI outputs to be verified without revealing the underlying data or model logic. As an investor attuned to long-cycle trends in secure computation, I see zkML not as hype but as essential infrastructure for sustainable AI deployment.

The Privacy Imperative for Autonomous AI Agents

AI agents built for zkML applications operate in decentralized environments, querying models on proprietary datasets such as commodity prices or bond yields. Without safeguards, inference exposes proprietary strategies. Consider a trading agent analyzing macroeconomic indicators: if its inputs are revealed, its competitive edge disappears. zkML addresses this with zero-knowledge proofs of private inference. These proofs show that a computation was performed correctly while keeping its details concealed. This aligns with conservative principles: verify rigorously, disclose minimally.

Current frameworks falter here. Open inference leaks data. Encrypted channels only protect data in transit: the party running the model still sees it, and side-channel attacks remain possible. zkML flips the script by embedding cryptographic assurances into the ML pipeline. Outputs become tamper-proof attestations, fostering trust in high-stakes sectors such as finance and healthcare.

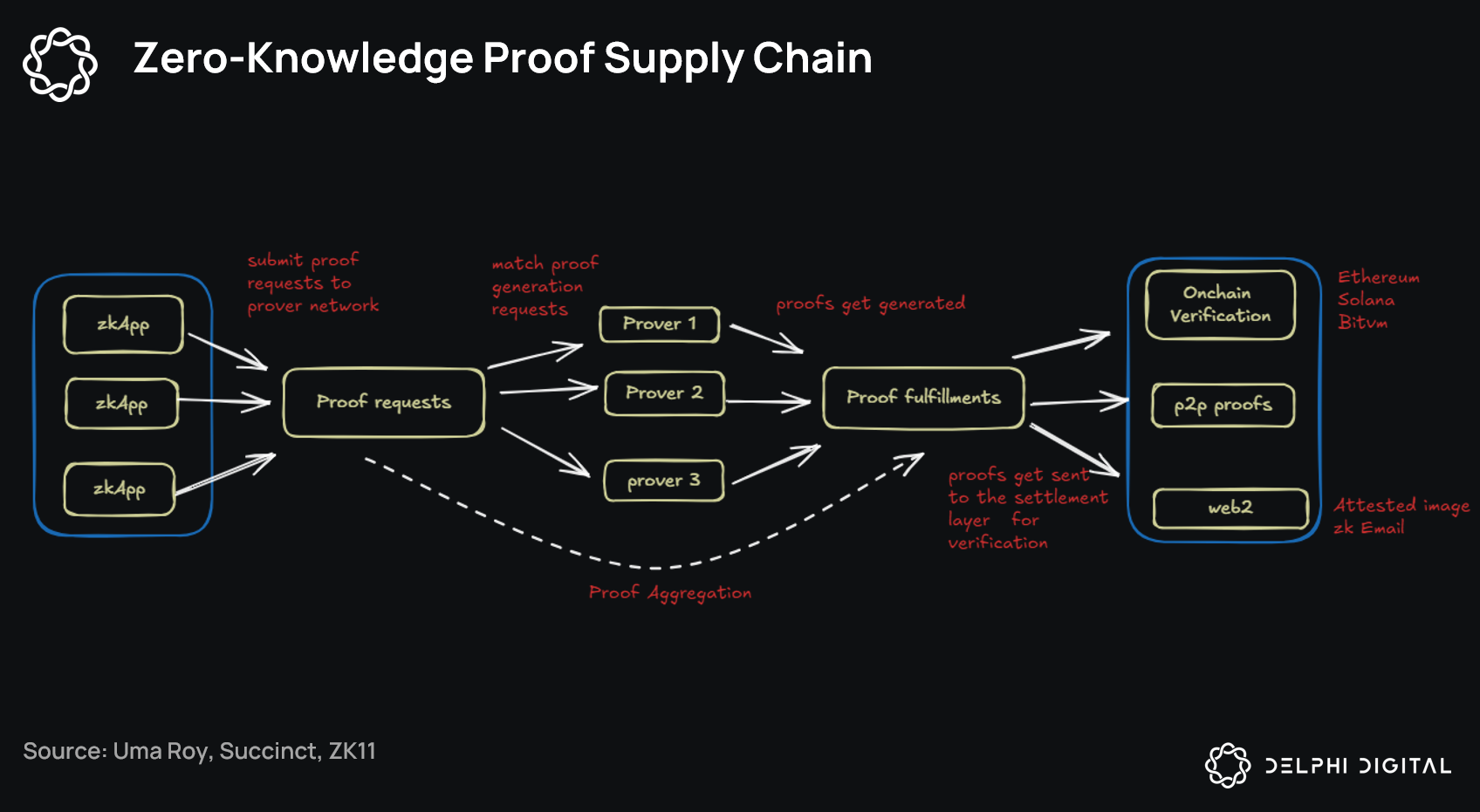

Zero-Knowledge Proofs: Enabling Verifiable Computation in ML

At its core, zkML relies on zero-knowledge proofs (ZKPs). In these protocols, a prover convinces a verifier that a statement is true without revealing anything beyond that fact. For ML, this means generating proofs during training or inference. A neural network’s forward pass is normally opaque to outside parties, but it can yield a succinct proof that anyone can check, on-chain or off.
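To make the prover/verifier relationship concrete, here is a minimal sketch of one of the simplest zero-knowledge proofs. It is a Schnorr proof of knowledge of a discrete logarithm, made non-interactive with the Fiat-Shamir heuristic. This is not zkML itself. Proving a neural network requires a general-purpose SNARK or STARK over the entire computation. The group parameters (`P`, `Q`, `G`) are toy-sized assumptions chosen only for readability and are not secure. The prover shows it knows `x` with `y = G^x mod P`, and the verifier never learns `x`.

```python
import hashlib
import secrets

# Toy group parameters (assumption, NOT secure): P = 2*Q + 1 is a safe prime,
# and G = 4 (a quadratic residue) generates the subgroup of prime order Q.
P = 2039
Q = 1019
G = 4


def challenge(y: int, t: int) -> int:
    """Fiat-Shamir: derive the verifier's challenge by hashing the public values."""
    data = f"{G}|{P}|{y}|{t}".encode()
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(x: int) -> tuple[int, int, int]:
    """Prove knowledge of x such that y = G^x mod P, without revealing x."""
    y = pow(G, x, P)
    r = secrets.randbelow(Q)      # fresh random nonce; reusing it would leak x
    t = pow(G, r, P)              # prover's commitment
    c = challenge(y, t)
    s = (r + c * x) % Q           # response; the random r masks the secret x
    return y, t, s


def verify(y: int, t: int, s: int) -> bool:
    """Accept iff G^s == t * y^c mod P; only public values are used."""
    c = challenge(y, t)
    return pow(G, s, P) == (t * pow(y, c, P)) % P


secret = 777                      # the prover's private witness
y, t, s = prove(secret)
print(verify(y, t, s))            # True
print(verify(y, t, (s + 1) % Q))  # False: a tampered response fails
```

The check passes because `G^s = G^(r + c*x) = t * y^c` in a group of order `Q`. Any change to the response breaks that equality, which mirrors the "tamper-proof output" property zkML relies on at much larger scale.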

Take zkLLM, built for large language models. It tailors ZKPs to LLM architectures, proving that responses derive from valid computations. In agent contexts, this ensures actions stem from correctly computed, private inferences. Skeptics question scalability. Yet optimizations such as recursive proofs, which fold many proofs into one, keep verification costs low and bring zkML verifiable computation closer to the needs of real-time agents.

Key zkML Benefits

- Data Confidentiality: zkML keeps private data hidden during AI training and inference, as in ARPA Network’s Verifiable AI Framework.
- Model Protection: Proprietary model details stay concealed while correct outputs are proven, as in Polyhedra’s zkML platform.
- On-Chain Verifiability: Proofs can be verified directly on blockchains such as Mina Protocol, providing decentralized trust.
- Tamper-Proof Outputs: Any change to an AI result after computation causes its proof to fail verification.
- Scalable Proofs: Succinct ZKPs keep verification cheap even for complex ML models, although generating the proofs remains costly.

Pioneering Frameworks Driving zkML Adoption

Recent advancements solidify zkML’s trajectory. Mina Protocol’s February 2025 zkML library equips developers to generate proofs from AI models, settling them on its succinct blockchain. Ideal for agents needing lightweight verification, it supports decentralized apps without privacy trade-offs.

ARPA Network’s October 2025 verifiable AI framework uses ZKPs to secure outputs, proving correctness sans data or logic exposure. Polyhedra’s platform produces ML inferences with execution proofs, a good fit for trustless agents in Web3. Bionetta by Distributed Lab offers client-side private inference. It keeps user inputs hidden even when the model itself is public; biometric authentication for agents is a natural use.

These tools converge on a vision: privacy-preserving ML agents that operate verifiably across chains. From Ethereum attestations wrapped in ZKPs to custom LLMs, zkML redefines agent autonomy. Investors like me value this for macroeconomic models. Private bond yield predictions that are verified publicly help build resilient portfolios.

These innovations signal a maturing ecosystem where zkML AI agents can thrive without the vulnerabilities plaguing centralized AI. Yet, adoption hinges on practical integration, balancing proof generation overhead with agent responsiveness. From my vantage in macroeconomic modeling, zkML’s value lies in its ability to secure long-cycle forecasts, such as commodity supply chains or yield curve shifts, against data poaching.

Real-World Applications: zkML in Action

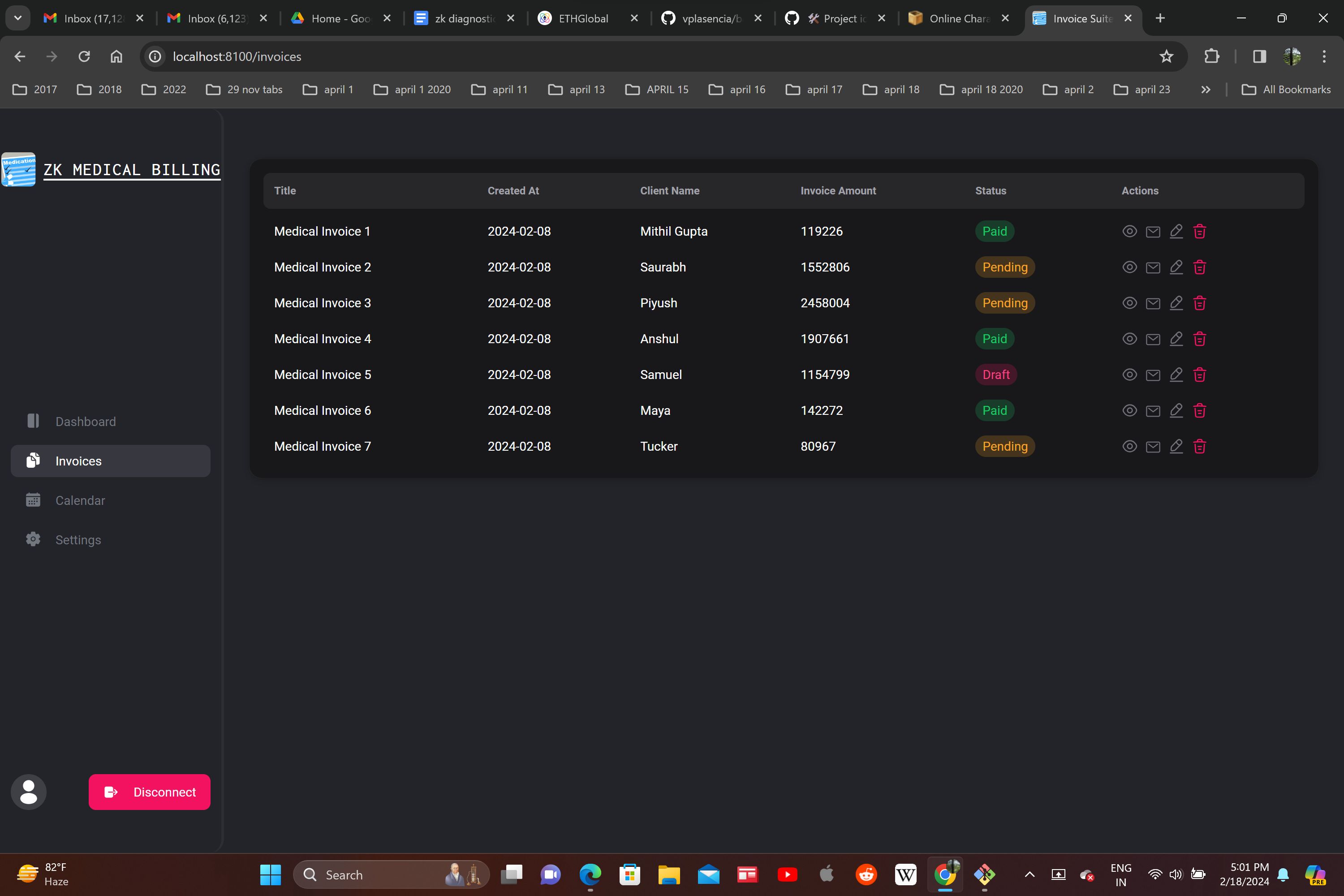

Imagine a decentralized trading agent sifting through private bond portfolios to recommend allocations. Using zero-knowledge proofs of private inference, it produces an output, say a predicted yield adjustment, accompanied by a ZKP. Verifiers confirm the math without glimpsing portfolio details or model weights. This setup suits conservative strategies that prioritize verifiable stability over flashy gains.
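A proof against hidden weights only means something if the operator has bound itself to one specific model in advance. Below is a minimal sketch of that binding step. It assumes a salted SHA-256 commitment over JSON-serialized weights. `commit` and `open_commitment` are hypothetical helpers, and the weights are made-up placeholders. zkML systems often commit inside the proof circuit with a field-friendly hash instead. In a full system, the ZKP would show that the output came from the weights behind `published` without ever opening them. The opening check here only illustrates that the commitment cannot be quietly swapped.

```python
import hashlib
import json
import secrets


def commit(weights: list[float], salt: bytes) -> str:
    """Salted SHA-256 commitment: the salt hides the weights, the hash binds them."""
    payload = json.dumps(weights).encode() + salt
    return hashlib.sha256(payload).hexdigest()


def open_commitment(commitment: str, weights: list[float], salt: bytes) -> bool:
    """Check that (weights, salt) matches a previously published commitment."""
    return commit(weights, salt) == commitment


# Hypothetical private yield-model weights held by the agent's operator.
weights = [0.42, -1.3, 0.07]
salt = secrets.token_bytes(32)
published = commit(weights, salt)   # only this hex digest is made public

print(open_commitment(published, weights, salt))             # True
print(open_commitment(published, [0.42, -1.3, 0.08], salt))  # False: altered model
```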

In healthcare, agents could analyze patient data for diagnostics, proving that inferences align with trained models sans exposing records. Polyhedra’s platform shines here, enabling such proofs for on-chain settlement. Bionetta extends this to the client side, where agents handle biometrics privately and output verified identities for Web3 access. Such use cases underscore zkML’s role in fostering accountable autonomy.

Overcoming Scalability Hurdles: Practical zkML for Agents

Critics highlight proof computation costs, especially for large models. Recursive SNARKs and hardware acceleration mitigate this. zkLLM demonstrates proofs for LLMs in minutes, which is workable for agent loops that can tolerate that latency. Further optimizations, such as arithmetic tailored to the finite fields that proof systems operate over, reduce latency. They move zkML verifiable computation closer to the real-time needs of volatile markets.
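Proof systems work over a finite field rather than floating-point numbers, so zkML pipelines typically quantize models to fixed-point integers first. Below is a minimal sketch of a single neuron computed purely with field additions and multiplications. The modulus, the 8-bit `SCALE`, and the helper names are illustrative assumptions. `2**61 - 1` (a Mersenne prime) stands in for whatever field a given proof system fixes, such as the BN254 scalar field. Negative values wrap to the top half of the field, and decoding is exact as long as the true result stays below half the modulus in magnitude.

```python
# Stand-in prime modulus (assumption): 2**61 - 1, a Mersenne prime.
FIELD = 2**61 - 1
SCALE = 2**8  # hypothetical fixed-point scale: 8 fractional bits


def quantize(x: float, scale: int = SCALE) -> int:
    """Map a real number to a signed fixed-point integer."""
    return round(x * scale)


def to_field(v: int) -> int:
    """Encode a signed integer as a field element (negatives wrap to FIELD - |v|)."""
    return v % FIELD


def from_field(f: int) -> int:
    """Decode a field element to a signed integer (upper half means negative)."""
    return f - FIELD if f > FIELD // 2 else f


def dense_in_field(weights: list[int], inputs: list[int], bias: int) -> int:
    """One neuron, w . x + b, using only field additions and multiplications."""
    acc = to_field(bias)
    for w, x in zip(weights, inputs):
        acc = (acc + to_field(w) * to_field(x)) % FIELD
    return acc


w_real, x_real, b_real = [0.5, -1.25, 2.0], [1.5, 0.75, -0.5], 0.1
w_q = [quantize(w) for w in w_real]
x_q = [quantize(x) for x in x_real]
b_q = quantize(b_real, SCALE * SCALE)  # bias must live at the product scale

out = from_field(dense_in_field(w_q, x_q, b_q)) / (SCALE * SCALE)
exact = sum(w * x for w, x in zip(w_real, x_real)) + b_real
print(out, exact)  # agree up to quantization error
```

Non-linear layers such as ReLU need comparisons, which circuits express with extra range checks or lookup arguments. That is one reason proving costs grow with model complexity.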

Consider Ethereum integration: ICME’s trustless agents wrap attestations in succinct ZKPs for mainnet verification. This enables cross-chain agents that query private oracles and publish public proofs. As an investor, I appreciate how this fortifies portfolios against black-box risks. It gives assurance that bond models actually computed on the macro signals they claim to use.

The Investment Case for zkML Infrastructure

From commodities to fixed income, zkML equips agents for privacy-first forecasting. Public verification of private models unlocks collaborative intelligence; think of syndicated macro agents pooling insights sans data spill. ARPA and Mina are paving the way, and their frameworks signal ecosystem depth.

Risks linger: proof systems do not yet support arbitrary network architectures universally. Yet momentum builds. zkML shifts AI from opaque oracles to auditable engines, which is vital for sustainable growth. In my practice, integrating these proofs into yield models produces resilient edges, verifiable across stakeholders. As adoption scales, privacy-preserving ML agents will underpin trustless economies, rewarding patient builders over speculators.

Stakeholders must prioritize rigorous auditing and interoperability. The trajectory points toward ubiquitous zkML, where agents operate with cryptographic integrity. This not only safeguards data but elevates AI’s role in prudent decision-making, a cornerstone for enduring value.