zkML Private Verifiable Memory for AI Agents Explained

In the high-stakes world of AI agents handling sensitive trading data or personalized financial strategies, memory isn’t just storage; it’s a vault of proprietary insights that demands ironclad privacy and verifiability. Enter zkML private verifiable memory, a game-changer fusing zero-knowledge proofs with machine learning to let AI agents recall and process data without exposing a whisper of the underlying secrets. As someone who’s blended zkML into DeFi portfolios for years, I’ve seen how this tech turns opaque agent behaviors into transparent, trustworthy operations, all while keeping the underlying data private.

Picture an AI agent in a decentralized trading bot: it pulls from historical positions, user preferences, and real-time signals to recommend moves. Traditional setups leak data to clouds or require blind trust in the agent’s black box. zkML flips this by generating proofs that confirm memory access and computations happened correctly, without revealing contents. This kind of verifiable computation isn’t theoretical; it’s powering agents that collaborate across chains without rug-pull risks.

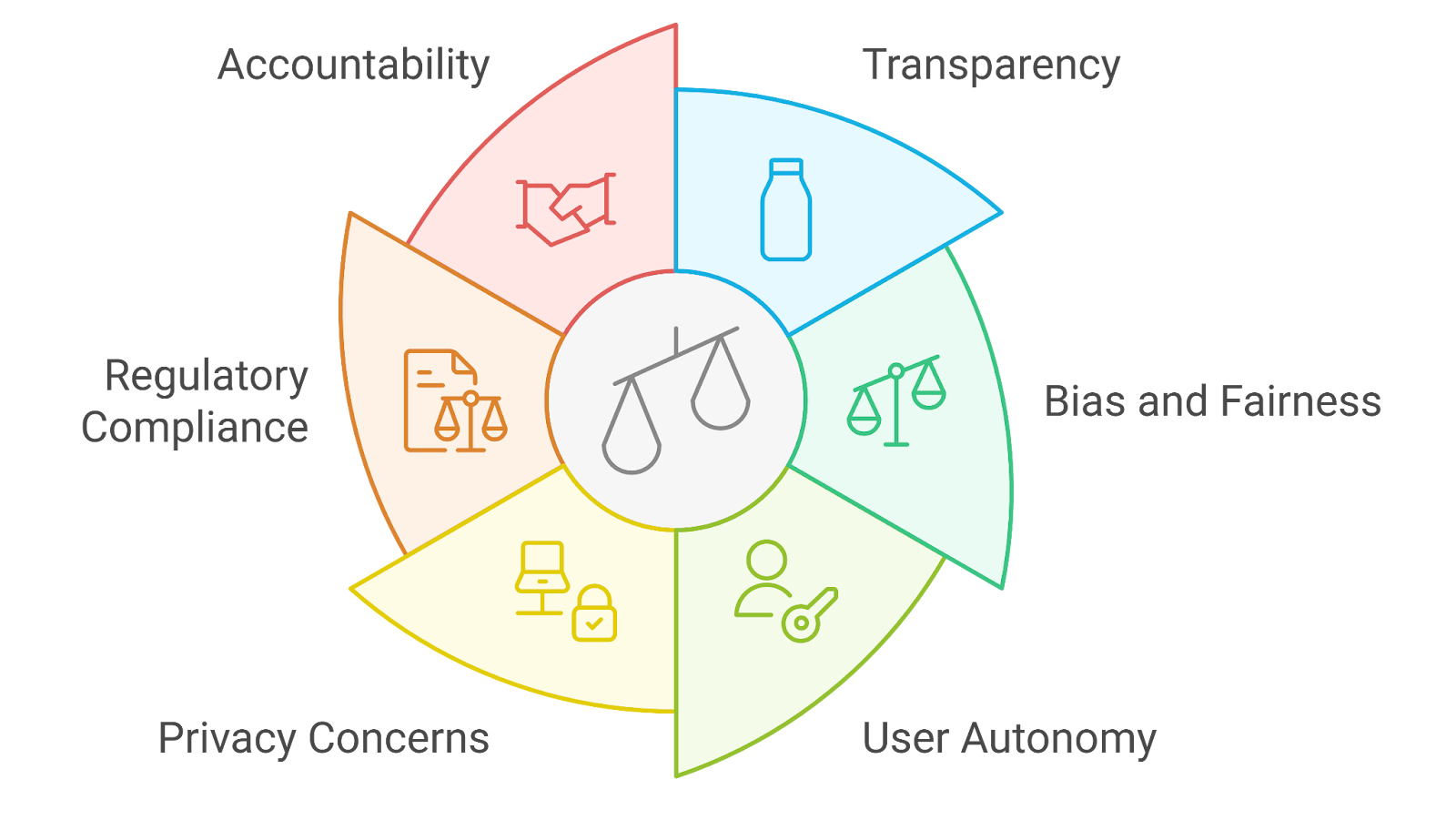

Why Memory Management Breaks AI Agents Today

AI agents thrive on memory – episodic logs of past actions, vector embeddings of strategies, long-term state for multi-step reasoning. Yet, in privacy-sensitive realms like finance or healthcare, sharing this memory invites breaches. Agents can’t personalize without risking data sovereignty, and verification often means full disclosure. I’ve debated this in zkmlai.org forums: developers building private AI agents with zkML struggle with memory consistency, where agents drift due to unverified state updates.

Key AI Agent Memory Challenges

- Data Leakage Risks: Sensitive data exposure during memory access in multi-agent interactions, despite privacy layers like ZKPs.
- Unverified State Mutations: Difficulty ensuring memory state changes are legitimate without revealing private computations, as in zkML inference.
- Scalability Bottlenecks: Privacy layers like zero-knowledge proofs slow down operations, e.g., ‘swimming through concrete’ in ZKML inference.
- Cross-Agent Trust Gaps: Lack of verifiable collaboration between agents without trusted intermediaries, addressed by frameworks like MemTrust.
- Computational Overhead: High costs from naive encryption in verifiable AI memory, mitigated by optimized systems like Jolt Atlas.

These pain points compound in multi-agent systems, where one agent’s memory influences another’s decisions. Without cryptographic anchors, it’s a house of cards – efficient but fragile. zkML steps in as the structural steel, proving memory integrity at scale.
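To make the "unverified state mutations" problem concrete, here is a minimal sketch of a hash-chained memory log. It is not a zero-knowledge proof and not how any framework named here works internally; it only shows the kind of cryptographic anchor that lets a verifier detect edited or reordered updates. The names `GENESIS`, `entry_digest`, `append_update`, and `verify_log` are hypothetical, chosen for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # hypothetical starting digest for an empty memory log


def entry_digest(prev_digest: str, update: dict) -> str:
    """Hash the previous digest together with a canonical encoding of the update."""
    payload = json.dumps(update, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_digest + payload).encode("utf-8")).hexdigest()


def append_update(log: list, update: dict) -> None:
    """Append an update and record the running digest alongside it."""
    prev = log[-1]["digest"] if log else GENESIS
    log.append({"update": update, "digest": entry_digest(prev, update)})


def verify_log(log: list) -> bool:
    """Recompute every digest; an edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry_digest(prev, entry["update"]) != entry["digest"]:
            return False
        prev = entry["digest"]
    return True


log = []
append_update(log, {"step": 1, "position": "long", "size": 3})
append_update(log, {"step": 2, "position": "flat", "size": 0})
print(verify_log(log))  # True

log[0]["update"]["size"] = 30  # tamper with an earlier memory entry
print(verify_log(log))  # False
```

Note the limits of this sketch: the verifier sees every update in the clear, and dropping entries from the tail goes unnoticed unless the latest digest is published somewhere. A zkML setup aims to keep the integrity guarantee while hiding the contents.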

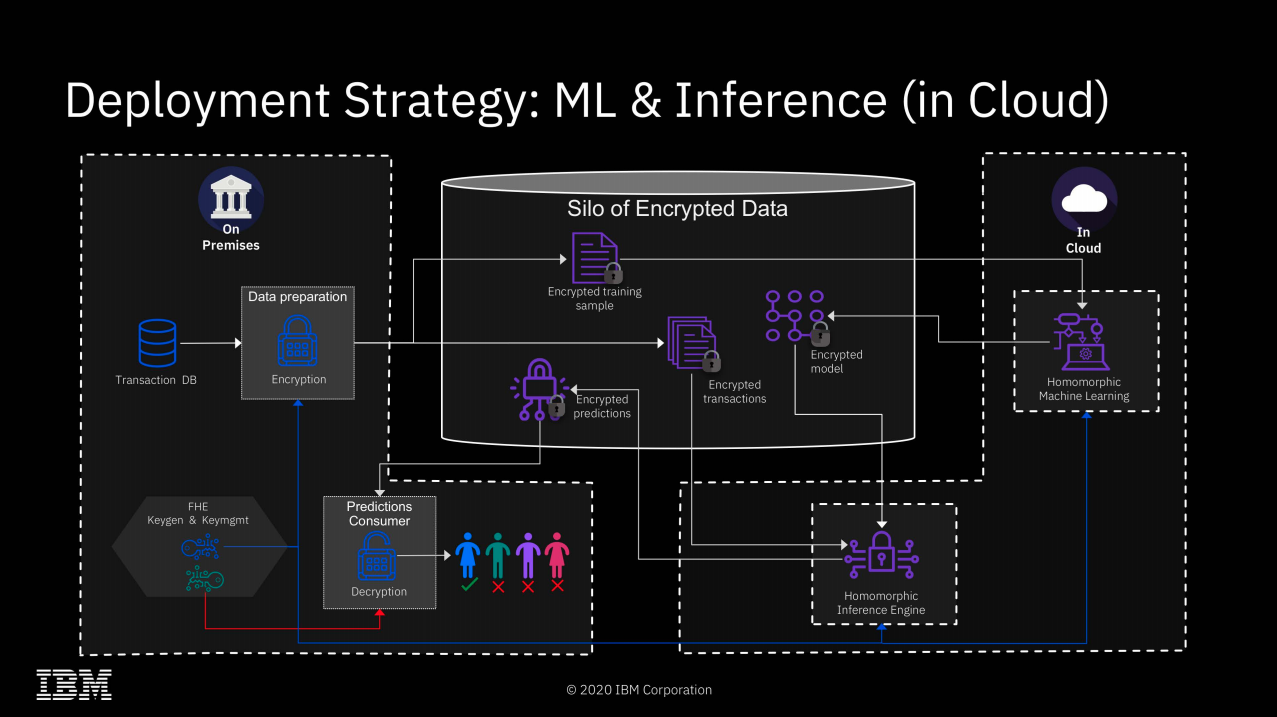

zkML’s Core Mechanics for Verifiable Memory

At heart, zkML compiles ML models – think neural nets in ONNX format – into arithmetic circuits provable via zero-knowledge schemes like Groth16 or PlonK. For memory, this extends to tensors and state vectors: Jolt Atlas, fresh from February 2026, tackles this head-on. Its lookup-centric proving for ONNX operations verifies memory consistency without bloating proofs. Non-linear activations? Handled natively. Memory-constrained? Optimized for it. In my hybrid analysis workflows, this means agents prove portfolio simulations privately, letting me verify without peeking at positions.
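The lookup idea can be illustrated with a toy example. In a lookup argument, the prover shows that each claimed (input, output) pair of a non-linear operation appears in a precomputed table, rather than re-deriving the operation inside the circuit. The sketch below does that check in plain Python for ReLU over 8-bit fixed-point values. It is an assumption-laden illustration, not Jolt Atlas’s implementation: the scale of 16, the int8 range, and the names `quantize`, `RELU_TABLE`, and `lookup_check` are all made up for this example, and no proof is generated.

```python
def quantize(x: float, scale: int = 16) -> int:
    """Map a real value to a signed 8-bit fixed-point integer (assumed scale)."""
    q = round(x * scale)
    return max(-128, min(127, q))


# Precomputed table of every valid (input, output) pair for ReLU over int8.
RELU_TABLE = {(x, max(0, x)) for x in range(-128, 128)}


def lookup_check(pairs) -> bool:
    """Accept only if every claimed (input, output) pair appears in the table."""
    return all(pair in RELU_TABLE for pair in pairs)


activations = [quantize(v) for v in (-1.5, 0.25, 3.0)]
honest = [(x, max(0, x)) for x in activations]

print(activations)                # [-24, 4, 48]
print(lookup_check(honest))       # True
print(lookup_check([(-24, 24)]))  # False: ReLU(-24) must be 0
```

The appeal of this design is that the table is fixed and small, so the cost of a non-linear activation becomes a membership check instead of a chain of arithmetic constraints.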

MemTrust complements with a zero-trust stack for unified AI memory. Layered TEE protections ensure sovereignty across short-term caches and long-term archives. Cryptographic guarantees mean agents collaborate – say, one forecasts volatility, another executes trades – sans data exposure. No more trusting cloud middlemen; proofs settle disputes on-chain.

Real-World zkML Memory Innovations Accelerating Adoption

Mina Protocol’s zkML library democratizes this: snag private inputs, run inference, spit out a proof verifiable on their succinct chain. Developers convert models to circuits effortlessly, ideal for zkML-backed agent memory in mobile trading apps. Then there’s Allora and Polyhedra’s tag-team: model fingerprinting via EXPchain hashes ensures tamper-proof integrity. Agents stay ‘unruggable,’ as one DEV post nailed it, with verifiable outputs across web3 ecosystems.
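Model fingerprinting boils down to hashing the model so that any change to its weights changes the published digest. The sketch below shows the general idea with SHA-256 over a deterministic encoding of named weight lists. How Allora and Polyhedra actually compute or anchor fingerprints is not described here, so treat the encoding and the `fingerprint` function as hypothetical.

```python
import hashlib
import struct


def fingerprint(weights: dict) -> str:
    """SHA-256 over tensor names and float32-encoded values, in sorted name order."""
    h = hashlib.sha256()
    for name in sorted(weights):
        values = weights[name]
        encoded_name = name.encode("utf-8")
        # Length prefixes keep adjacent fields from running into each other.
        h.update(struct.pack("<I", len(encoded_name)))
        h.update(encoded_name)
        h.update(struct.pack("<I", len(values)))
        h.update(struct.pack(f"<{len(values)}f", *values))
    return h.hexdigest()


model = {"layer1.weight": [0.1, -0.2, 0.3], "layer1.bias": [0.0]}
published = fingerprint(model)

tampered = {"layer1.weight": [0.1, -0.2, 0.31], "layer1.bias": [0.0]}

print(fingerprint(model) == published)     # True
print(fingerprint(tampered) == published)  # False
```

Anyone holding the published digest can confirm that an agent is running the model it claims to run, provided the agent can also prove that the hashed weights are the ones used for inference.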

These aren’t silos; they’re stackable. Jolt for inference proofs, MemTrust for memory layers, Mina for deployment. In forums, we’re prototyping agent swarms where memory proofs enable emergent intelligence – think collective alpha generation without IP leaks. The overhead? Dropping fast, thanks to lookup arguments and recursion.

Proving it in practice starts simple: load your agent’s memory state as tensors, compile to a zkML circuit, execute inference, and generate the proof. Overhead shrinks to minutes on consumer hardware, verifiable in seconds on-chain. This stack turns solo agents into verifiable networks, where memory proofs bootstrap trustless collaboration.

Hands-On: Proving Agent Memory with zkML

Let’s get tactical. In a trading agent, memory holds vectorized position histories and risk embeddings. Using Mina’s zkML library, you convert an ONNX model reading this memory to a circuit. Run private inference – say, predicting drawdown from past trades – and output a proof attesting: “I used exactly this state, computed correctly, no leaks.” Verifiers check the proof without seeing the data. I’ve wired this into my DeFi bots; it flags anomalous memory drifts before they tank portfolios.
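It helps to spell out what such a proof would attest. The sketch below writes the statement as a plain relation between public inputs (a commitment to the memory state, the model weights, the claimed output) and a private witness (the state and a salt). It does not use Mina’s library or any real proving system, and the toy integer dot product stands in for an ONNX model; `commit`, `model`, and `relation` are hypothetical names. In this sketch the weights are public for simplicity, although a zkML setup can hide them as well.

```python
import hashlib
import secrets


def commit(state: list, salt: bytes) -> str:
    """Salted SHA-256 commitment to an integer memory vector."""
    encoded = ",".join(str(v) for v in state).encode("utf-8")
    return hashlib.sha256(salt + encoded).hexdigest()


def model(state: list, weights: list) -> int:
    """Toy stand-in for inference: an integer dot product."""
    return sum(s * w for s, w in zip(state, weights))


def relation(public: dict, witness: dict) -> bool:
    """The statement a circuit would enforce: the committed state yields the claimed output."""
    same_state = commit(witness["state"], witness["salt"]) == public["commitment"]
    right_output = model(witness["state"], public["weights"]) == public["output"]
    return same_state and right_output


state = [12, -7, 3]             # private memory (witness)
salt = secrets.token_bytes(16)  # private randomness (witness)
weights = [2, 1, -4]            # public in this sketch

public = {
    "commitment": commit(state, salt),
    "weights": weights,
    "output": model(state, weights),
}
witness = {"state": state, "salt": salt}

print(public["output"])                            # 5
print(relation(public, witness))                   # True
print(relation({**public, "output": 6}, witness))  # False
```

Here `relation` needs the witness to run, so it only defines the claim. The point of a zero-knowledge proof is that the verifier becomes convinced `relation` holds while seeing only `public`.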

Why zkML Verifiable Memory Wins in DeFi and Beyond

For hybrid analysts like me, fusing fundamentals with zkML-enhanced technicals, this is portfolio rocket fuel. Agents maintain a zkML-backed memory of user-specific strategies – high-conviction longs, delta-neutral hedges – proving simulations match reality privately. Medium-risk tolerance? Proofs quantify edge without exposing signals, enabling on-chain syndicates where agents pool alpha via memory attestations. In healthcare analogs, patient histories stay sovereign; proofs confirm personalized diagnoses.

Traditional vs zkML Memory Systems for AI Agents

| Aspect | Traditional Memory | zkML Memory |
|---|---|---|
| Privacy | Leak-prone: Sensitive data exposed in cloud storage ❌ | Private: Zero-knowledge proofs hide inputs/model details 🔒 |
| Verifiability | Unverified: No proof of correctness without revealing data ❌ | Verifiable: ZK proofs confirm integrity of computations ✅ |
| Scalability | Limited by centralized infrastructure | Scalable: Frameworks like Jolt Atlas for memory-constrained envs 📈 |
| Security Model | Vulnerable to breaches/tampering | Zero-trust: MemTrust with TEE protection 🛡️ |
| Memory Consistency | Manual checks, error-prone | Cryptographic verification of tensor ops (Jolt Atlas) 🔄 |
| Cross-Agent Collaboration | Data exposure to intermediaries | Secure sharing without revealing info 🤝 |
| Key Examples | – | Mina zkML Library, Polyhedra zkML, MemTrust |

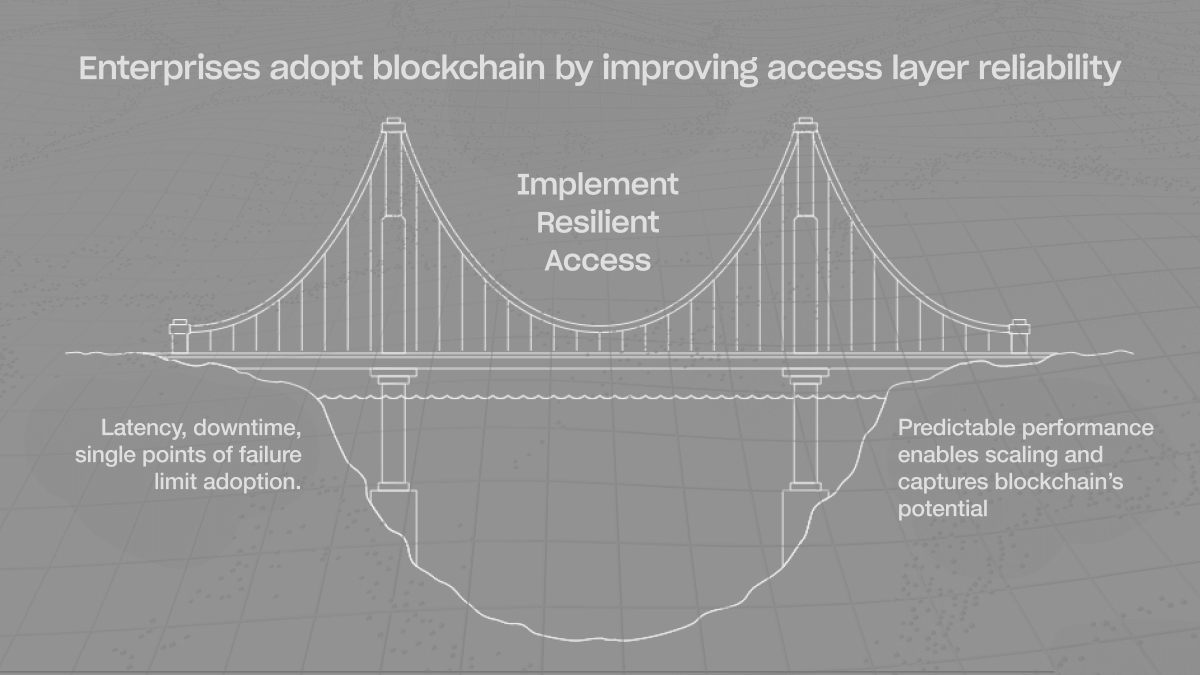

Cross-industry ripple: web3 DAOs verify contributor agents’ decision logs; finance firms audit without NDAs. Overhead critiques? Fair, but 2026 optimizations are narrowing the gap: Jolt’s memory-centric proofs clock under 10x slowdowns versus naive ZK. Forums buzz with recursion tricks pushing real-time viability. We’re not swimming through concrete anymore; it’s streamlined currents.

Challenges linger: circuit compilation times for massive models, proof aggregation for agent swarms. Yet, collaborative tinkering at zkmlai.org accelerates fixes – community circuits for common memory ops, shared lookup tables. Dip in; prototype your first private zkML memory vault for AI agents. The edge goes to those verifying first, leaking last. As zkML matures, expect agent memory to underpin trillion-dollar trust machines, where privacy isn’t a feature, it’s the foundation.