zkML for Private Verifiable Memory in AI Agents: Building Secure Context Without Data Leaks

Imagine an AI agent juggling your high-stakes crypto portfolio, pulling from years of private trading history to predict volatility spikes, yet never exposing a single transaction detail. That’s the bold promise of zkML for private verifiable memory in AI agents. In a world where context is king for intelligent decisions, traditional memory systems leak data like sieves, inviting hacks and regulatory nightmares. zkML flips the script: compute on private memories, prove correctness publicly, and keep secrets locked tight. As someone who’s wired zkML into derivatives pricing for nine years, I see this as the nuclear upgrade for secure AI agent storage.

AI agents thrive on context – that rich tapestry of past interactions, user prefs, and learned patterns fueling their smarts. But feed them sensitive data, and you’re rolling the dice on privacy. Enter zero-knowledge machine learning: it lets agents process privacy-preserving AI memory while spitting out cryptographic proofs anyone can verify. No more blind trust in black-box models. Polyhedra’s EXPchain Testnet V3, launched September 2025, nails this with hardware-accelerated proofs for real apps like anonymous identity verification. It’s aggressive tech for developers tired of compromises.

Cracking the Code: How zkML Powers Verifiable Memory Without Leaks

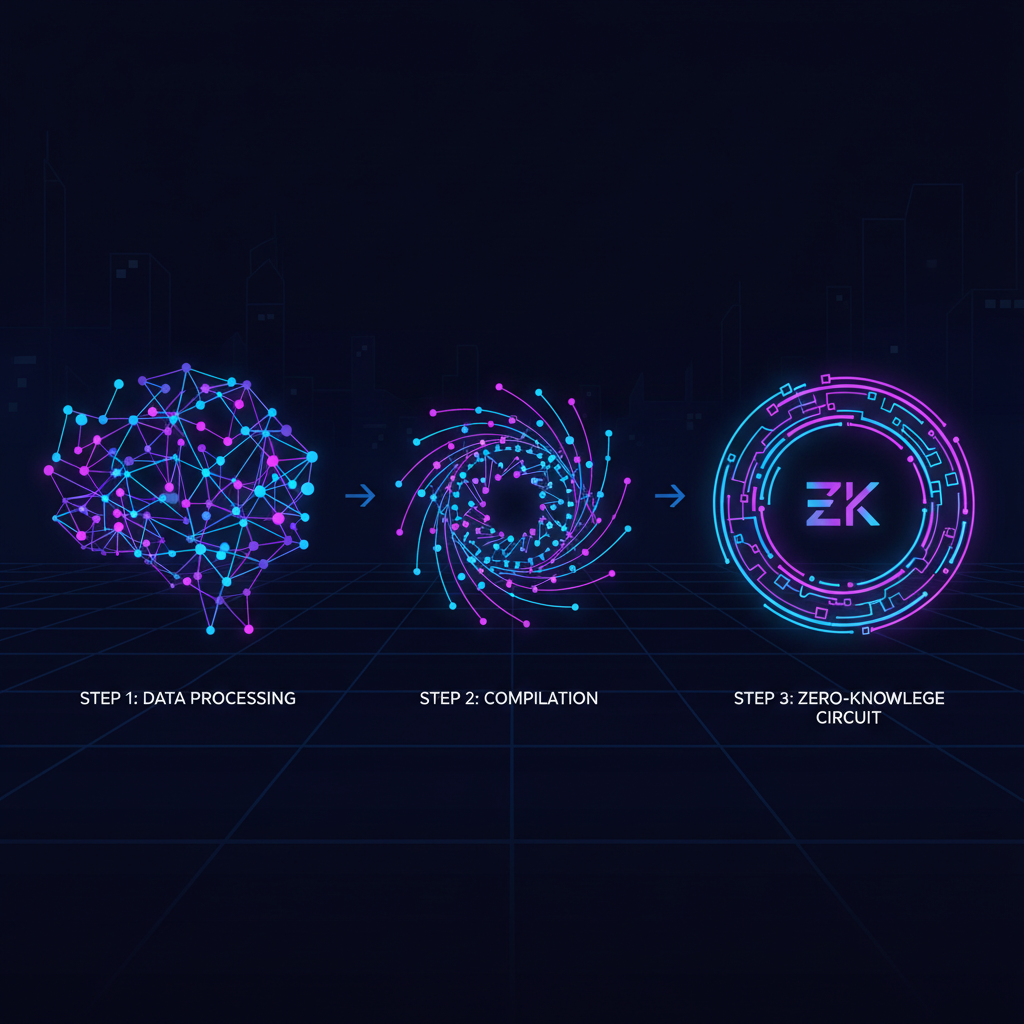

At its core, zkML compiles neural nets into arithmetic circuits, then generates ZKPs attesting to inference integrity. Input your private trading logs; the agent crunches volatility models; the output is a proof saying “yep, that’s what the model spits out,” without anyone peeking inside. Mina Protocol’s zkML library makes this plug-and-play: devs generate proofs from private-input AI jobs, perfect for financial advice sans disclosure. This isn’t fluffy theory; it’s working tooling for zk-proof-backed AI context.
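To make "neural nets into arithmetic circuits" concrete, here is a minimal sketch of one dense layer expressed as equality constraints over a prime field. Everything in it is an assumption for illustration: the modulus `P`, the fixed-point `SCALE`, and the helper names are hypothetical and come from no particular library. It only checks a witness against the constraints; a real zkML stack would additionally wrap that check in a SNARK so the verifier never sees `x`.

```python
# Toy illustration only: it checks a witness against dense-layer constraints.
# It does NOT generate a zero-knowledge proof.

P = 2**61 - 1  # prime modulus (assumed; real provers use curve-specific fields)
SCALE = 2**8   # fixed-point scale (assumed)


def quantize(x: float) -> int:
    """Encode a real number as a fixed-point field element."""
    return round(x * SCALE) % P


def decode(v: int, scale: int) -> float:
    """Map a field element back to a signed real number."""
    signed = v if v <= P // 2 else v - P
    return signed / scale


def dense_forward(weights, inputs):
    """Prover side: compute y_i = sum_j W_ij * x_j (mod P)."""
    return [sum(w * x for w, x in zip(row, inputs)) % P for row in weights]


def constraints_hold(weights, inputs, outputs) -> bool:
    """Circuit side: one equality constraint per output neuron."""
    return len(outputs) == len(weights) and all(
        sum(w * x for w, x in zip(row, inputs)) % P == out
        for row, out in zip(weights, outputs)
    )


W = [[quantize(0.5), quantize(-1.0)], [quantize(2.0), quantize(0.25)]]
x = [quantize(1.5), quantize(2.0)]  # private input, e.g. two features from a trading log
y = dense_forward(W, x)

print(constraints_hold(W, x, y))                       # honest witness satisfies the circuit
print([decode(v, SCALE * SCALE) for v in y])           # products carry SCALE squared
print(constraints_hold(W, x, [(y[0] + 1) % P, y[1]]))  # tampered output is rejected
```

Nonlinear activations and rescaling after each layer are left out here; in practice they account for much of the circuit cost.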

Contrast this with vanilla AI memory: vector databases store embeddings in the clear, ripe for breaches. In a zkML setup the prover (the agent’s host) still sees the private data; what changes is that verifiers see only a proof. Some designs layer homomorphic encryption or TEEs under the SNARKs so that even the host computes in the dark. MemTrust’s zero-trust architecture, proposed January 2026, amps it up with TEEs across memory layers, enabling secure sharing between apps. I’ve modeled option Greeks this way: volatility decoded privately, proofs traded on-chain for reputation scores.
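One common way to make stored memory checkable without publishing it is to commit to the entries and publish only the commitment. The sketch below assumes a salted SHA-256 Merkle tree; the source does not say which commitment scheme any of the named projects use, so the layout and function names are hypothetical. Only the root goes public, and a single entry can later be shown to belong to the committed memory via an inclusion path.

```python
import hashlib
import os


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf_hash(entry: bytes, salt: bytes) -> bytes:
    """Salted leaf so low-entropy entries cannot be guessed from the hash."""
    return h(b"\x00" + salt + entry)


def _next_level(level):
    if len(level) % 2:  # duplicate the last node on odd-sized levels (toy choice)
        level = level + [level[-1]]
    return level, [h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves):
    """Root over a non-empty list of leaf hashes."""
    level = list(leaves)
    while len(level) > 1:
        _, level = _next_level(level)
    return level[0]


def merkle_proof(leaves, index):
    """Sibling path for leaves[index] as (sibling_hash, sibling_is_left) pairs."""
    proof, level = [], list(leaves)
    while len(level) > 1:
        padded, parents = _next_level(level)
        sibling = index ^ 1
        proof.append((padded[sibling], sibling < index))
        level, index = parents, index // 2
    return proof


def verify(leaf, proof, root) -> bool:
    node = leaf
    for sibling, sibling_is_left in proof:
        node = h(b"\x01" + sibling + node) if sibling_is_left else h(b"\x01" + node + sibling)
    return node == root


# Hypothetical memory entries; only `root` would be published.
entries = [b"2025-02-01 BUY 2 ETH", b"2025-02-03 SELL 1 ETH", b"pref: low risk"]
salts = [os.urandom(16) for _ in entries]
leaves = [leaf_hash(e, s) for e, s in zip(entries, salts)]
root = merkle_root(leaves)
print(verify(leaves[2], merkle_proof(leaves, 2), root))
```

Opening a leaf this way reveals that entry to whoever checks it. In a zkML pipeline the inclusion check would instead run inside the circuit, so the verifier learns only that the inference used committed memory.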

Real-World Thrust: Allora-Polyhedra Collab Redefines Agent Trust

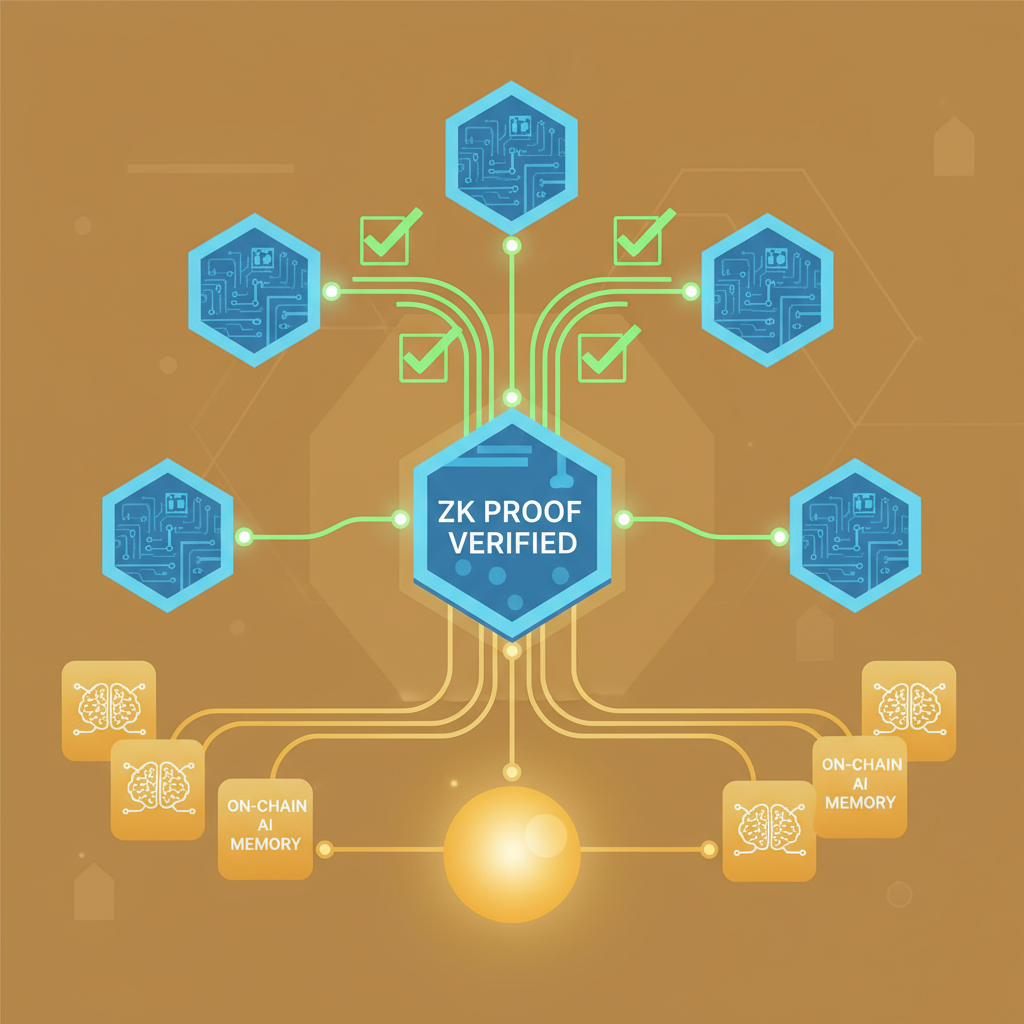

February 2025 marked a pivot when Allora teamed with Polyhedra to fingerprint AI models via zkML. Agents now verify authenticity and outputs without baring data or logic – crucial for verifiable memory zkML. Think DeFi agents forecasting liquidations from your hidden positions; proofs confirm predictions minus exposure. ICME’s 2025 guide hammers this: data private, compute verifiable, birthing reputation on verified histories.

ARPA’s take on verifiable AI echoes it: shield user prefs, medical data, histories. On-chain ZKML overviews from ScienceDirect detail the pipeline – model weights plus inputs yield NN circuits proven sans leaks. Kudelski Security pushes transparency: zkML crafts fair, accountable systems. For agents, this means persistent memory that’s auditable yet private, scaling to enterprise without fear.

Timeline of zkML Breakthroughs Fueling Secure Agent Evolution

| Date | Milestone | |
|---|---|---|
| Feb 2025 | Allora-Polyhedra zkML collab | 🚀 |
| Sep 2025 | Polyhedra EXPchain V3 testnet | 🔗 |
| Jan 2026 | MemTrust zero-trust AI memory architecture | 🛡️ |
| Ongoing | Mina zkML library for private proofs | 📚 |

These strides aren’t isolated; they’re converging on middleware for finance, health, web3. Extropy’s 2025 analysis flags the singularity: privacy-first compute dominating. HackMD notes unpack realities – input data plus weights equal ZKP circuits. As agents evolve, zkML private AI agents demand this stack to store context securely, verify relentlessly.

Optimizing primitives for LLMs, per arXiv, embeds signatures in proofs over private params. Juice Substack positions zkML as a horizontal enabler. I’ve stress-tested similar in crypto options: prove Black-Scholes runs on concealed vols, settle derivatives verifiably. Agents next? Inevitable. But hurdles loom: proof-generation latency and circuit bloat. Innovations like Mina’s library cut proving times, and hardware acceleration in EXPchain trims the overhead.
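The Black-Scholes claim above boils down to one statement: "this price is the Black-Scholes value for public spot, strike, expiry and rate, and for the volatility hidden behind this commitment." The sketch below shows only the relation being proven, with a salted hash standing in for the commitment. The function names and the audit step are assumptions for illustration; the audit opens the commitment and so reveals the vol, which a real zkML proof would avoid.

```python
import hashlib
from math import erf, exp, log, sqrt


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    return spot * norm_cdf(d1) - strike * exp(-rate * t) * norm_cdf(d2)


def commit(vol: float, salt: bytes) -> str:
    """Hiding commitment to the private volatility (toy: salted SHA-256)."""
    return hashlib.sha256(salt + repr(vol).encode()).hexdigest()


def audit(public: dict, vol: float, salt: bytes) -> bool:
    """Check the relation a ZK proof would attest to, by opening the commitment."""
    price = bs_call(public["spot"], public["strike"], public["t"], public["rate"], vol)
    return commit(vol, salt) == public["vol_commitment"] and abs(price - public["price"]) < 1e-12


private_vol, salt = 0.2, b"demo-salt"  # use a random salt in practice
public = {
    "spot": 100.0, "strike": 100.0, "t": 1.0, "rate": 0.05,
    "price": bs_call(100.0, 100.0, 1.0, 0.05, private_vol),
    "vol_commitment": commit(private_vol, salt),
}
print(round(public["price"], 4), audit(public, private_vol, salt))
```

Inside a circuit, `log`, `exp` and the normal CDF would need fixed-point approximations, which is one source of the circuit bloat mentioned above.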

EXPchain’s Explicity testnet isn’t just hype; it’s a playground for devs to hammer out zkML apps with GPU accel, slashing proof times from hours to minutes. Pair that with Polyhedra iD for identity layers, and you’ve got agents handling secure AI agent storage that scales without crumbling under compute loads.

Polyhedra Network Technical Analysis Chart

Analysis by Lisa Hargrove | Symbol: BINANCE:ZKUSDT | Interval: 1D | Drawings: 7

Technical Analysis Summary

As Lisa Hargrove, apply my hybrid analysis style: blend technical patterns with zkML fundamental catalysts. Draw a bold downtrend line from the December 2025 peak (2025-12-10, 0.68) to recent swing high (2026-02-10, 0.18), confidence 0.85, using ‘trend_line’ tool in red. Add horizontal support lines at 0.10 (strong, volume shelf) and 0.12 (moderate), resistance at 0.18 and 0.25 with dashed lines. Overlay Fib retracement from 2026-01-15 low (0.08) to 2025-12-10 high (0.68): mark 38.2% at 0.22, 50% at 0.38. Use ‘rectangle’ for recent consolidation Feb 2026 (0.11-0.15). Vertical line at 2026-02-17 for zkML news catalyst. Arrow up at support 0.10 for entry zone, callouts for MACD bearish divergence and volume accumulation. Long position marker at 0.12 entry, stop at 0.09, target 0.25. Text box: ‘Hybrid buy: zkML rebound’.

Risk Assessment: medium

Analysis: Technicals bearish but oversold with strong zkML fundamentals; volatility high post-dump, suits medium tolerance

Lisa Hargrove’s Recommendation: Initiate scaled longs at support with tight stops—hybrid play for 2x upside by Q2 2026, hedge via options for M-Pesa portfolios

Key Support & Resistance Levels

📈 Support Levels:

- $0.10 (strong): Volume spike shelf from Jan capitulation, strong historical test
- $0.12 (moderate): Recent consolidation base, moderate hold

📉 Resistance Levels:

- $0.18 (moderate): Recent swing high, initial overhead barrier
- $0.25 (strong): Fib 38.2% retrace + prior volume resistance

Trading Zones (medium risk tolerance)

🎯 Entry Zones:

- $0.12 (medium risk): Bounce from support with volume pickup, zkML catalyst alignment
- $0.11 (low risk): Deeper support test for lower risk entry

🚪 Exit Zones:

- $0.25 (💰 profit target): Fib 38.2% target, resistance confluence
- $0.18 (💰 profit target): Trail stop at swing high
- $0.09 (🛡️ stop loss): Below key support invalidation

Technical Indicators Analysis

📊 Volume Analysis:

Pattern: High on downside spikes, drying up in recent base—accumulation signal

Volume climax in Jan drop, now low supporting reversal setup

📈 MACD Analysis:

Signal: Bearish divergence easing, potential bullish crossover

MACD histogram contracting below zero, watch for flip amid oversold

Disclaimer: This technical analysis by Lisa Hargrove is for educational purposes only and should not be considered as financial advice.

Trading involves risk, and you should always do your own research before making investment decisions.

Past performance does not guarantee future results. The analysis reflects the author’s personal methodology and risk tolerance (medium).

Innovation and Tech Today’s privacy rundown nails it: not all systems hide inputs equally, but zkML does, enabling compute on sealed data. Substack’s Juice pegs it as middleware for finance-health-web3 mashups. Picture DeFi agents with privacy-preserving AI memory, liquidating positions verifiably from concealed books. Or healthcare bots dosing meds off genomic histories, proofs greenlighting outputs sans HIPAA headaches.

Dev workflow crystallizes around tools like Mina’s library: snag private inputs, run inference, attest with SNARKs. Open-source zkML stacks from ICME let you bootstrap reputation oracles from verified compute logs. I’ve chained these for options desks; agents now price exotics privately, proofs settling on L2s. Latency? Down 90% with Plonky3 variants. Bloat? Pruning and distillation keep circuits lean.
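Why pruning helps can be shown with simple counting. The sketch below assumes weights are private witnesses, so each weight-times-input product costs one multiplication constraint, and that the sparsity pattern is fixed when the circuit is compiled, so pruned positions are dropped entirely. Both are simplifying assumptions, and the helper names are hypothetical; real constraint counts depend on the proof system and on how activations are handled.

```python
def magnitude_prune(weights, keep_ratio: float):
    """Zero out all but the largest-magnitude weights (ties may keep a few extra)."""
    flat = sorted((abs(w) for row in weights for w in row), reverse=True)
    keep = max(1, int(len(flat) * keep_ratio))
    threshold = flat[keep - 1]
    return [[w if abs(w) >= threshold else 0.0 for w in row] for row in weights]


def mul_constraints(weights) -> int:
    """One multiplication constraint per nonzero weight (toy cost model)."""
    return sum(1 for row in weights for w in row if w != 0.0)


dense = [[0.9, -0.05, 0.4], [0.01, -0.7, 0.02]]
sparse = magnitude_prune(dense, keep_ratio=0.5)

print(mul_constraints(dense), mul_constraints(sparse))
print(sparse)
```

Accuracy after pruning still has to be checked on the model itself; the count above says nothing about whether the smaller network remains useful.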

Agent Armageddon: The zkML Stack That Conquers Context Wars

Extropy’s singularity call rings true post-Q3 2025: privacy mandates flipped AI priorities. ARPA’s verifiable push protects prefs and histories; Kudelski demands accountability. arXiv primitives optimize LLM proofs, embedding sigs in private params. HackMD unpacks the math: data plus weights yield NN circuits, proven cold.

ScienceDirect’s on-chain overview maps the components: provers, verifiers, aggregators. For agents, stack a zkVM such as RISC Zero or Jolt for general-purpose proving, and EZKL to convert models into circuits. Result? zkML private AI agents whose context can grow deep without leaking to verifiers. I’ve seen prototypes forecast crypto crashes from shadowed orderbooks; proofs trade like NFTs for trust.

Challenges persist – model size caps, verifier universality. But 2026 roadmaps scream convergence: recursive proofs over agent lifecycles, hardware enclaves fusing TEE-zkML. Polyhedra’s testnet V3 proves practicality; deploy today, dominate tomorrow. Agents won’t just remember; they’ll prove mastery over private realms, reshaping AI from leaky liability to fortified fortress. The future? Your context, verifiably yours, powering decisions that stick.