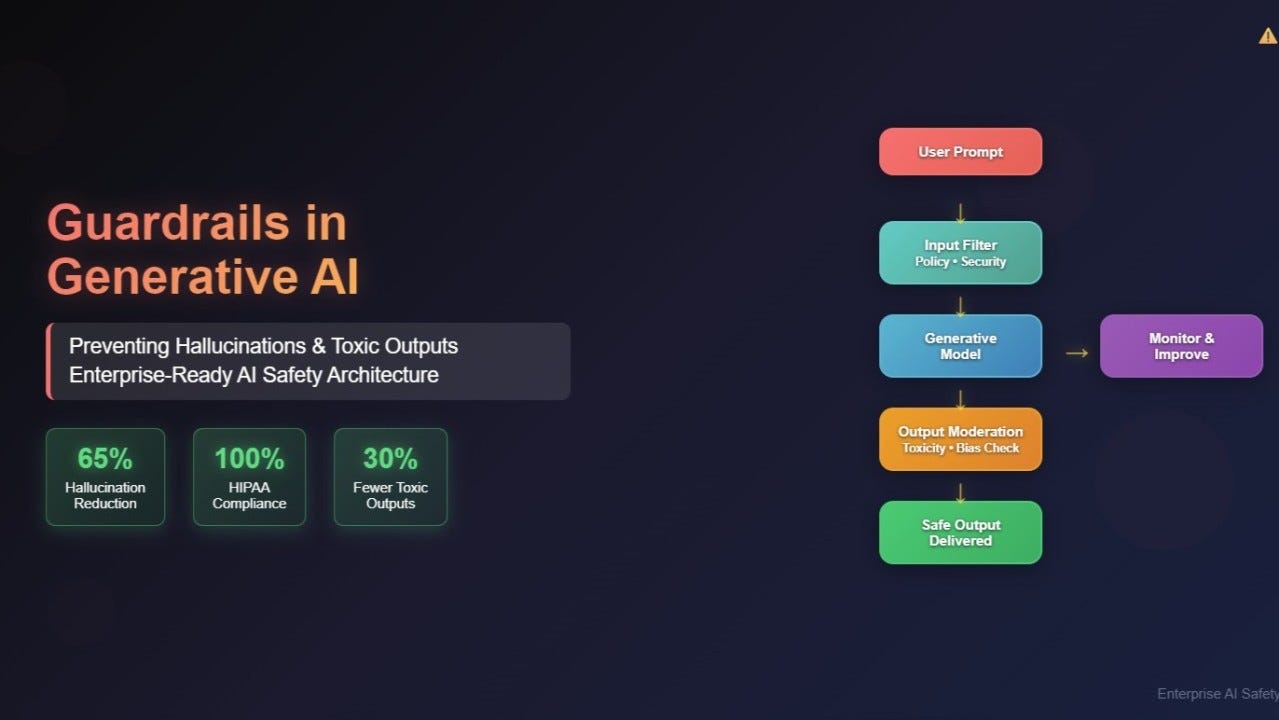

Mira Network Multi-Model Consensus Using zkML for Reliable AI Outputs

In an era where AI outputs increasingly influence critical decisions across finance, healthcare, and governance, the specter of hallucinations and biases undermines confidence. Mira Network addresses this head-on through a multi-model consensus mechanism augmented by zero-knowledge machine learning (zkML). This decentralized protocol cross-verifies AI responses using diverse, independent models, so that outputs are not only checked for accuracy but also cryptographically verifiable. As a proponent of privacy-preserving technologies, I view Mira’s fusion of multi-model consensus, zkML, and blockchain as a conservative bulwark against the volatility of unverified intelligence.

Mira Network operates as a trust layer for AI, using models such as GPT-4o, Llama 3.3, and DeepSeek-R1 to scrutinize claims. Each output must achieve consensus across these systems, with domain-specific thresholds filtering out inconsistencies. The results of this decentralized verification are then hashed and anchored on blockchains such as Ethereum and BNB Chain, making the record immutable and transparent. From a risk management perspective, this setup mirrors the diversification principles I advocate in portfolio construction: spreading verification across uncorrelated models minimizes single points of failure.
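To make the anchoring step concrete, here is a minimal sketch of how a verification record might be reduced to a digest before being written on-chain. The record layout and the `verification_digest` name are hypothetical, and SHA-256 stands in for whatever hash Mira actually uses (Ethereum contracts typically use Keccak-256). The on-chain write itself is omitted.

```python
import hashlib
import json
from typing import Dict


def verification_digest(claim: str, verdicts: Dict[str, bool]) -> str:
    """Return a hex SHA-256 digest of a canonical JSON verification record.

    Assumption: the record is just the claim plus one boolean verdict per
    model. A real protocol record would carry more fields.
    """
    record = {"claim": claim, "verdicts": verdicts}
    # sort_keys and fixed separators make the serialization deterministic,
    # so the same record always produces the same digest.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Because the serialization is canonical, anyone holding the original record can recompute the digest and compare it with the anchored value; changing any single verdict produces a different digest.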

Dissecting Mira’s Multi-Model Consensus Engine

At its core, Mira’s consensus requires three independent AI models to align on a given query, a threshold dubbed “3/3 consensus.” Sources highlight GPT-4o, Claude 3.5 Sonnet, and Llama 3.3 70B Instruct as exemplars, though the updated architecture incorporates Llama 3.3 and DeepSeek-R1 for broader coverage. This ensemble approach combats hallucinations by comparing independent perspectives; if one model falters, its disagreement with the others keeps the claim from passing. VeriLLM research underscores Mira’s use of domain-specific thresholds, tailoring verification to contexts like factual claims or logical reasoning.
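The consensus rule can be sketched in a few lines. This is an illustration under stated assumptions, not Mira’s implementation: the `consensus` function, the verdict labels, and the threshold table are all hypothetical, and the relaxed 2/3 threshold for a "summary" domain is invented purely to show how a domain-specific threshold could differ from the unanimous 3/3 default.

```python
from collections import Counter
from fractions import Fraction
from typing import Dict, Optional

# Hypothetical thresholds: the fraction of models that must agree per domain.
# Only the unanimous (3/3) case is described in the text above.
DOMAIN_THRESHOLDS = {
    "factual": Fraction(1),      # 3/3 consensus
    "summary": Fraction(2, 3),   # assumed relaxed threshold, for illustration
}


def consensus(verdicts: Dict[str, str], domain: str) -> Optional[str]:
    """Return the agreed verdict, or None if the threshold is not met."""
    if not verdicts:
        return None
    # Unknown domains fall back to unanimity, the strictest setting.
    threshold = DOMAIN_THRESHOLDS.get(domain, Fraction(1))
    label, votes = Counter(verdicts.values()).most_common(1)[0]
    share = Fraction(votes, len(verdicts))
    return label if share >= threshold else None
```

With three models, a single dissenting verdict yields `None` under the unanimous threshold, which matches the idea that one faltering model blocks the claim rather than being silently outvoted.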

Benefits of Mira Multi-Model Consensus

- Reduced AI Hallucinations: Cross-verification by independent models like GPT-4o, Llama 3.3, and DeepSeek-R1 minimizes factual errors.
- Enhanced Reliability: Achieves trustworthy outputs without retraining models, using domain-specific thresholds and zkML proofs.
- Decentralized Trust: Verification outcomes hashed and anchored on blockchains like Ethereum and BNB Chain for tamper-proof records.
- Scalable for AI Apps: Supports autonomous applications via Mira Verify API and apps like Klok, with hybrid PoW/PoS incentives.

Conservatively speaking, this mechanism sidesteps the pitfalls of relying on a single monolithic model. Traditional single-model systems, prone to systematic errors, pale against Mira’s distributed verifiers. Nodes stake tokens under a hybrid Proof-of-Work and Proof-of-Stake system that incentivizes honesty: rewards for accurate consensus, slashing for malice. This crypto-economic alignment fosters long-term network stability, much as a stake in quality equities yields compounding returns.
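The reward-and-slash incentive can be illustrated with a toy settlement round. Everything here is an assumption made for the sketch: the `settle_round` name, the flat reward, the 10% slash rate, and above all the use of "disagreed with consensus" as a proxy for malice, which a real protocol would need to define far more carefully.

```python
from typing import Dict


def settle_round(
    stakes: Dict[str, int],
    verdicts: Dict[str, str],
    consensus_label: str,
    reward: int = 1,
    slash_bps: int = 1000,  # assumed slash rate in basis points (1000 = 10%)
) -> Dict[str, int]:
    """Return updated stakes after one verification round.

    Stakes are integer token units so the arithmetic stays exact.
    """
    updated = {}
    for node, stake in stakes.items():
        if verdicts.get(node) == consensus_label:
            # Node matched the consensus verdict: pay a flat reward.
            updated[node] = stake + reward
        else:
            # Node disagreed or did not answer: slash a share of its stake.
            updated[node] = stake - stake * slash_bps // 10_000
    return updated
```

For example, with two nodes staking 100 units each and a consensus verdict of "true", the node that answered "true" ends the round with 101 units and the node that answered "false" ends with 90.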

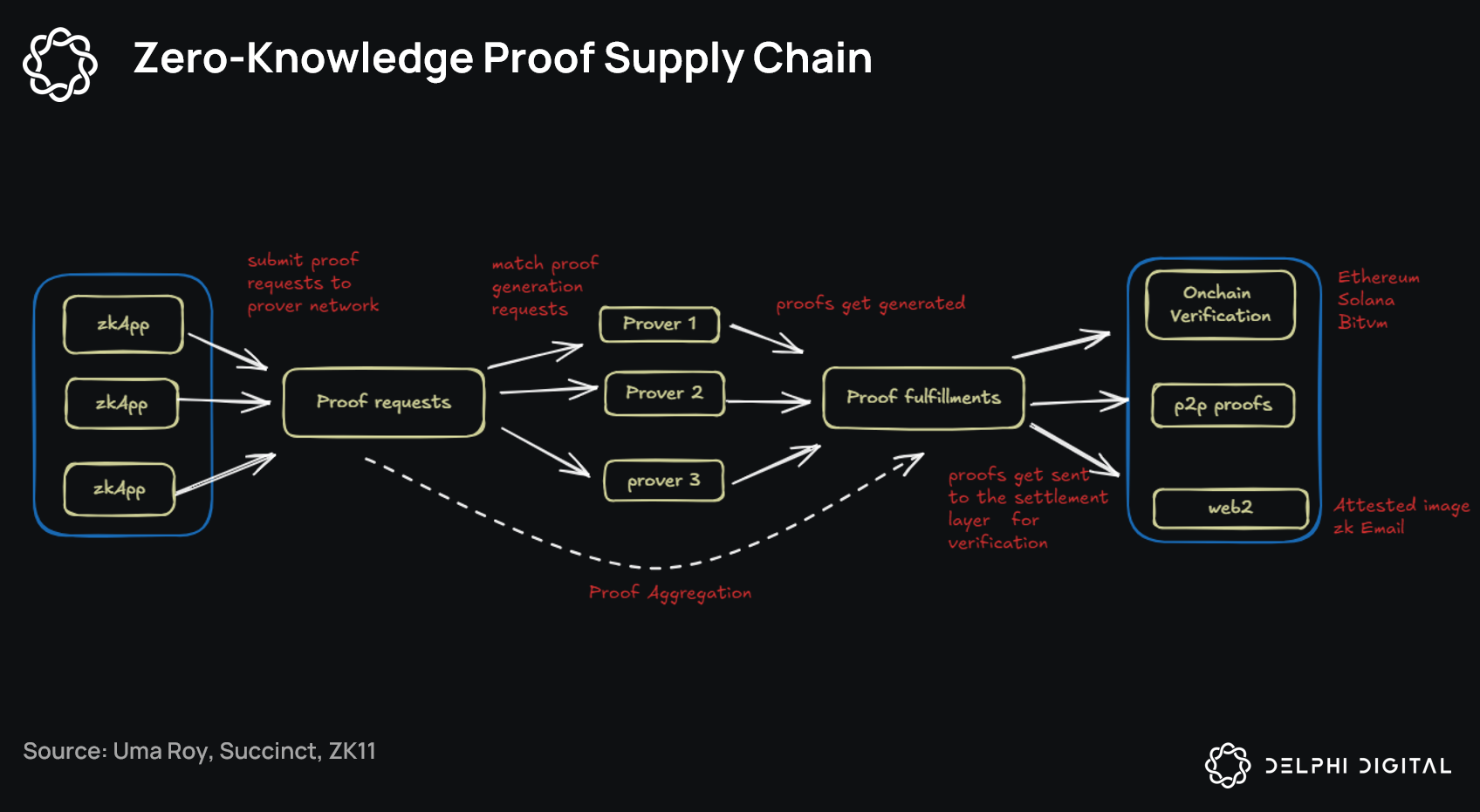

zkML Integration: The Privacy Powerhouse

Mira elevates its protocol by weaving in zkML, enabling zero-knowledge proofs for AI inferences without exposing sensitive inputs. Drawing from Mina Protocol’s zkML library, developers generate proofs attesting to model execution fidelity privately. This is pivotal for applications handling confidential data, such as financial analysis or medical diagnostics, where I specialize. zkML ensures verifiability sans revelation, aligning with my emphasis on data sovereignty in decentralized environments.

Imagine querying proprietary datasets through the Mira Verify API: outputs are verified via multi-model consensus, proven via zkML, and logged on-chain. No human oversight is required, yet tamper-proof assurance persists. Ambient’s Proof-of-Logits complements this, but Mira’s zkML focus provides succinct, scalable proofs. In my view, this conservative integration mitigates AI’s black-box risks, offering investors a verifiable edge in privacy-preserving models.

Ecosystem Momentum and Practical Deployments

Mira’s Klok app exemplifies real-world utility, delivering a multi-model chat interface where users earn Mira Points toward $MIRA tokens after the token generation event (TGE) in Q3 2025. With $9 million in seed funding and a $10 million builder fund, the network is scaling ambitiously. The mainnet launch will unlock staking and governance, embedding the token in verification economics. Messari notes Mira’s edge in reducing hallucinations without retraining, positioning it as infrastructure for trustworthy AI.

Blocmates describes Mira as an on-chain protocol with diverse node verifiers, echoing PANews’s description of it as a trust layer against bias. For developers, the API supports building autonomous agents; for researchers, the zkML tools open the way to novel proofs. This trajectory suggests Mira Network’s zkML-backed consensus could redefine decentralized AI verification, rewarding patient builders over hype-driven ventures.