zkML Guardrails for Preventing AI Agent Data Leaks Using NovaNet ZKP

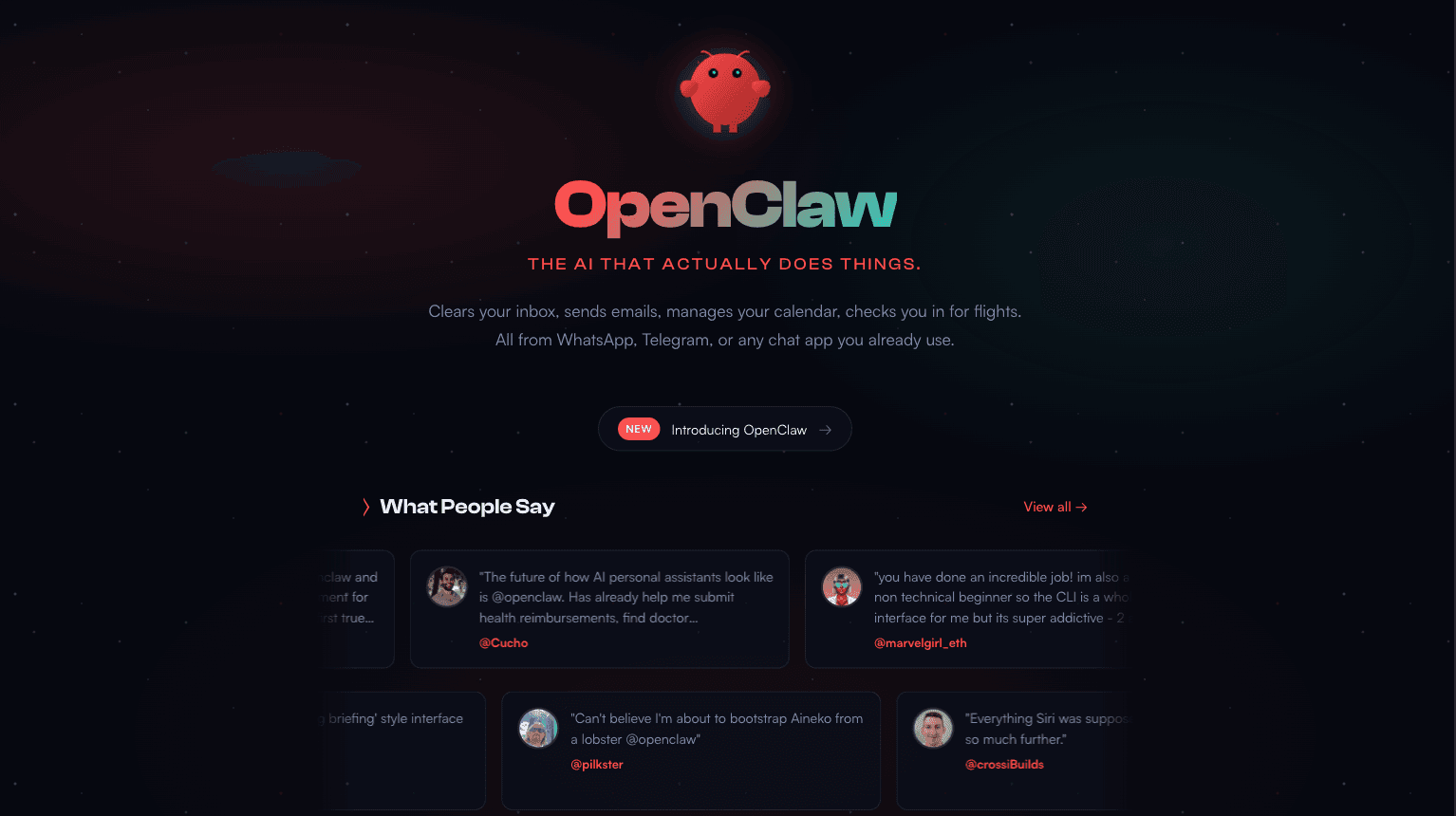

In the evolving landscape of autonomous AI agents, the specter of data leaks looms large, threatening the integrity of operations from financial transactions to personal data management. As systems like OpenClaw gain traction with their self-hosted capabilities for handling digital tasks via messaging platforms, the demand for robust zkML guardrails has never been more pressing. NovaNet ZKP emerges as a pivotal innovation, fusing zero-knowledge proofs with machine learning to forge tamperproof barriers that safeguard sensitive information without sacrificing functionality. This reflective exploration delves into how such mechanisms can redefine AI agent security, ensuring agents operate verifiably within predefined bounds.

The Vulnerabilities of Unfettered AI Agents

Consider the trajectory of AI agents: OpenClaw, with its 100K+ GitHub stars, exemplifies the shift toward local-first, autonomous entities capable of orchestrating complex workflows. Yet this autonomy introduces perils. Agents interfacing with external tools or networks risk exposing proprietary data, misallocating funds, or deviating from compliance protocols. Traditional guardrails, reliant on centralized monitoring or rule-based filters, falter under sophisticated attacks or internal errors. They demand trust in the enforcer, a fragile foundation in decentralized environments.

Reflecting on recent discourse, voices in the zkML community advocate for a paradigm grounded in mathematics. Zero-knowledge machine learning offers succinct verifiability: proofs that an agent adhered to a specific model or policy, without revealing its inputs. This is no mere theoretical elegance; it addresses real-world frailties, such as those highlighted in agentic commerce, where transactions must align with regulatory strictures without broadcasting trade secrets.

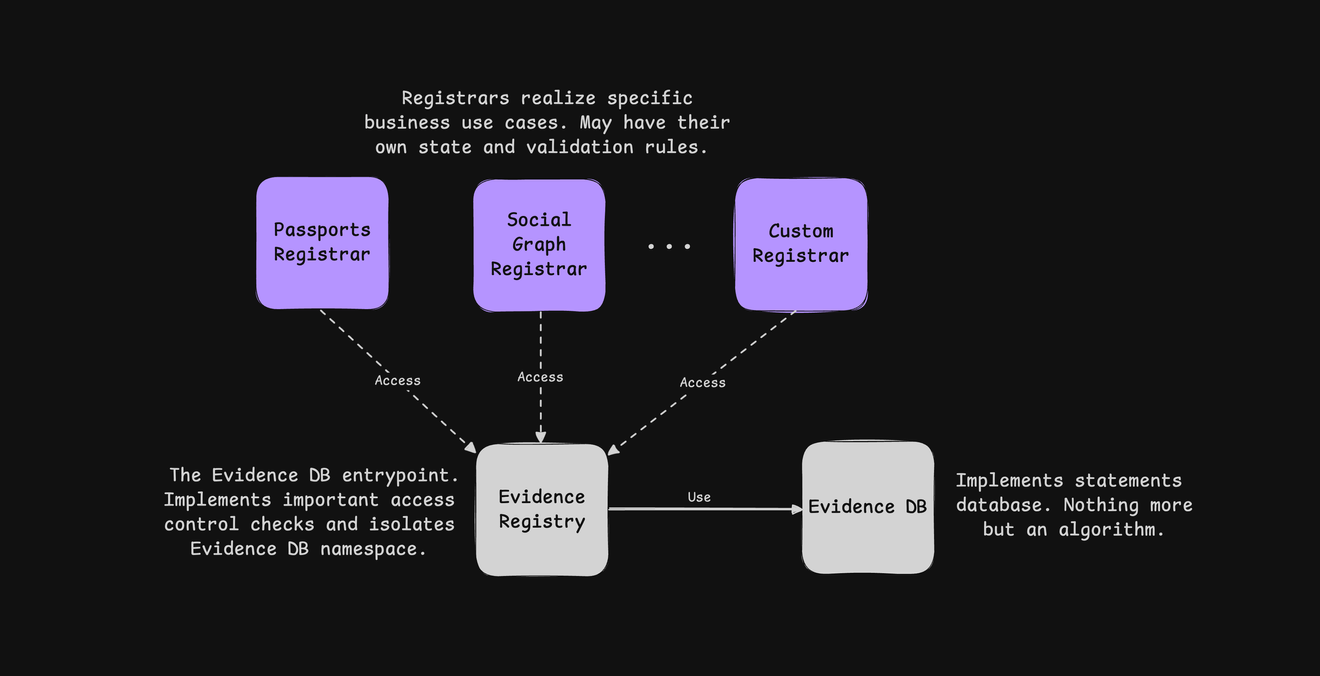

NovaNet ZKP: Architecting Collaborative zkML Infrastructure

At the heart of effective zkML guardrails lies NovaNet's decentralized prover network, a symphony of cooperative computation that sidesteps the pitfalls of proof racing, where many provers duplicate the same work to be first to submit a proof. By leveraging game-theoretic incentives, NovaNet fosters prover collaboration, slashing costs and bolstering privacy. Its zkFramework, modular and adaptable, integrates specialized provers tailored for machine learning workloads, enabling seamless zkML deployment.

This infrastructure proves instrumental for tamperproof AI. Imagine an AI agent processing healthcare queries: NovaNet ZKP generates proofs attesting that inferences drew from compliant models, without auditors glimpsing patient records. In finance, credit scoring models train on private datasets, yielding verifiable outputs that regulators can check without ever seeing the underlying data. Such capabilities stem from expressing a model's computation as a ZKP-compatible circuit, so that verifiers check a proof of correct execution rather than the raw information itself.

NovaNet ZKP Key Features

- Decentralized collaborative proving for efficiency, leveraging NovaNet's prover network to optimize zkML computations through cooperative protocols.
- Privacy-preserving model verification, enabling AI agents to cryptographically attest compliance without data exposure.
- Tamperproof audit trails for compliance, generating verifiable proofs of regulatory adherence in sensitive sectors.
- Modular integration with OpenClaw agents via zkFramework, facilitating seamless verifiable guardrails.
- Game-theoretic cost reduction, incentivizing prover cooperation to minimize expenses and enhance privacy.

Deploying Verifiable Guardrails Against Data Exfiltration

Preventing data leaks demands more than detection; it requires preemptive, cryptographic enforcement. With NovaNet ZKP, developers embed zkML checks into agent pipelines. Before executing a sensitive action, an OpenClaw agent submits the operation to a guardrail model. This model, encapsulated in a ZKP circuit, evaluates compliance: Does the query align with privacy policies? Are outputs free of embedded secrets? The result is a succinct proof of that evaluation, which can be verified on-chain or by any stakeholder.
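The pre-execution check can be sketched in Python as below. This is a minimal illustration under stated assumptions, not an NovaNet or OpenClaw API: the regex-based PII policy, `evaluate_guardrail`, `execute_if_allowed`, and the salted-hash commitment are all hypothetical stand-ins. In a real zkML deployment the policy logic would be compiled into a circuit and the prover would emit a proof that the public verdict was computed correctly over the committed payload; here no proof is generated, and only the control flow is shown.

```python
import hashlib
import re
import secrets
from dataclasses import dataclass
from typing import Callable, Tuple

# Hypothetical policy "pii-v1": block payloads that look like they carry PII or secrets.
PII_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "api_key": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
}


@dataclass(frozen=True)
class GuardrailAttestation:
    policy_id: str
    payload_commitment: str  # salted SHA-256; without the salt it hides the payload
    allowed: bool            # the only verdict made public
    # A real system would attach a ZK proof binding these public values together.


def commit(payload: str, salt: bytes) -> str:
    return hashlib.sha256(salt + payload.encode("utf-8")).hexdigest()


def evaluate_guardrail(payload: str, policy_id: str = "pii-v1") -> Tuple[GuardrailAttestation, bytes]:
    salt = secrets.token_bytes(16)
    # Which patterns matched stays private; only the boolean verdict is exposed.
    violations = [name for name, pat in PII_PATTERNS.items() if pat.search(payload)]
    attestation = GuardrailAttestation(policy_id, commit(payload, salt), allowed=not violations)
    return attestation, salt


def execute_if_allowed(payload: str, action: Callable[[str], str]) -> str:
    attestation, _salt = evaluate_guardrail(payload)
    if not attestation.allowed:
        raise PermissionError(f"guardrail {attestation.policy_id} blocked the action")
    return action(payload)
```

The agent never calls `action` unless the guardrail verdict is positive, and the attestation itself carries only a commitment to the payload rather than the payload.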

This approach shines in multi-agent systems, where orchestration multiplies leak vectors. By mandating proofs for inter-agent communications, NovaNet ensures data flows remain opaque yet auditable. Opinionated as it may sound, this shifts enforcement from brittle heuristics to immutable math, fostering trust in autonomous commerce. Early adopters report not just leak prevention but better scalability, because checking a succinct proof costs far less than re-running the computation it attests to.
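A receiving agent might gate inter-agent messages roughly as sketched below. The `Envelope` type, `accept`, and the `verify` callback are hypothetical names, not part of any real NovaNet interface. `mock_prove` and `mock_verify` use an HMAC purely as a placeholder so the example runs; an HMAC is neither zero-knowledge nor publicly verifiable, so a real system would plug in the verifier of whatever proof system its prover network uses.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Callable, Dict, Set


@dataclass(frozen=True)
class Envelope:
    sender: str
    policy_id: str
    payload_commitment: str  # the receiver sees a commitment, never the raw data
    proof: bytes             # placeholder for a succinct ZK proof


# A verifier takes (proof, public inputs) and returns True if the proof checks out.
Verifier = Callable[[bytes, Dict[str, str]], bool]


def public_inputs(env: Envelope) -> Dict[str, str]:
    return {"sender": env.sender, "policy_id": env.policy_id,
            "commitment": env.payload_commitment}


def accept(env: Envelope, verify: Verifier, allowed_policies: Set[str]) -> bool:
    """Reject any message whose policy is unknown or whose proof does not verify."""
    if env.policy_id not in allowed_policies:
        return False
    return verify(env.proof, public_inputs(env))


# --- Stand-in prover/verifier (HMAC). NOT zero-knowledge, NOT publicly verifiable. ---
_DEMO_KEY = b"demo-only-key"


def _encode(inputs: Dict[str, str]) -> bytes:
    return "|".join(f"{k}={inputs[k]}" for k in sorted(inputs)).encode("utf-8")


def mock_prove(inputs: Dict[str, str]) -> bytes:
    return hmac.new(_DEMO_KEY, _encode(inputs), hashlib.sha256).digest()


def mock_verify(proof: bytes, inputs: Dict[str, str]) -> bool:
    return hmac.compare_digest(proof, mock_prove(inputs))
```

Because the proof is bound to the sender, policy, and commitment, changing any of those public values (for example, replaying a proof under a different sender) makes verification fail.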

Yet, the true measure of these zkML guardrails lies in their practical integration, where theory meets the gritty realities of agent deployment. Developers interfacing OpenClaw with NovaNet ZKP find themselves equipped with tools that transform vulnerability into virtue. A guardrail circuit, once compiled, becomes an unyielding sentinel, scrutinizing every intent before action unfolds.

Crafting zkML Circuits for OpenClaw Compliance

Envision embedding a zkML verifier directly into an agent’s decision loop. NovaNet’s zkFramework simplifies this by providing pre-built circuits for common leak vectors: PII detection, fund transfer authorization, and policy adherence. The process unfolds methodically: first, define the guardrail model using lightweight ML frameworks compatible with ZK constraints; second, compile to arithmetic circuits via tools like those in NovaNet’s ecosystem; third, deploy on the collaborative prover network for on-demand proof generation. This yields a proof that not only attests to correct execution but also scales with agent autonomy, unburdened by centralized oversight.
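Step one, making the guardrail model compatible with ZK constraints, usually means quantizing it to fixed-point integers, because arithmetic circuits compute over a finite field rather than in floating point. The sketch below assumes a hypothetical three-feature linear classifier, an 8-bit fractional scale, and the Mersenne prime 2^61 - 1 as an illustrative modulus. Real proof systems fix their own field, and inside a circuit the final comparison would need a range-check gadget; here it is done in plain Python.

```python
P = 2**61 - 1  # illustrative prime modulus; real systems use their proof system's field
SCALE = 2**8   # fixed-point scale: 8 fractional bits

WEIGHTS = [0.75, -1.5, 2.0]  # hypothetical guardrail weights
BIAS = -0.5                  # hypothetical bias


def to_field(v: int) -> int:
    """Embed a signed integer into the field; negatives wrap around to P - |v|."""
    return v % P


def from_field(v: int) -> int:
    """Decode a field element to a signed integer (assumes |value| < P / 2)."""
    return v - P if v > P // 2 else v


def quantize(x: float) -> int:
    return round(x * SCALE)


def guardrail_score(features):
    """Linear score computed only with field additions and multiplications."""
    if len(features) != len(WEIGHTS):
        raise ValueError("feature vector has the wrong length")
    ws = [quantize(w) for w in WEIGHTS]
    xs = [quantize(f) for f in features]
    acc = 0
    for w, x in zip(ws, xs):
        acc = (acc + to_field(w) * to_field(x)) % P
    # Each product carries a factor of SCALE**2, so the bias is rescaled to match.
    acc = (acc + to_field(quantize(BIAS) * SCALE)) % P
    return from_field(acc)


def is_risky(features) -> bool:
    return guardrail_score(features) > 0
```

With features `[1.0, 0.0, 0.0]` the real-valued score is 0.75 - 0.5 = 0.25, which appears as 16384 (0.25 × 2^16) after quantization; dividing by `SCALE**2` recovers the float result as long as values stay well below P / 2.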

Reflecting on the broader implications, such circuits address the opacity plaguing agentic systems. Where traditional logging might capture snapshots, zkML proofs offer holistic verifiability. An auditor verifies that an OpenClaw agent processed a transaction against a credit model without ever accessing the underlying financial data, a feat impossible with heuristic checks alone. This resonates deeply in sectors demanding ironclad privacy, from decentralized finance protocols to sovereign data enclaves.
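One way an auditor can confirm that a decision came from an approved credit model, without seeing the applicant's data, is to bind every proof to a commitment to the model's weights. The sketch below is hypothetical: `model_commitment`, `InferenceClaim`, and `audit` are illustrative names, and the `verify` callback stands in for a real zkML verifier, which is not implemented here. The auditor handles only commitments and the public decision.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, Sequence, Set, Tuple


def model_commitment(weights: Sequence[int], model_id: str) -> str:
    """Deterministic serialization, so the same model always yields the same hash."""
    blob = json.dumps({"id": model_id, "weights": list(weights)}, sort_keys=True)
    return hashlib.sha256(blob.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class InferenceClaim:
    model_commitment: str  # which model was (claimed to be) run
    input_commitment: str  # commitment to the private applicant data
    decision: str          # the only result revealed to the auditor
    proof: bytes           # placeholder for a zkML proof over these public values


def audit(claim: InferenceClaim,
          approved_models: Set[str],
          verify: Callable[[bytes, Tuple[str, str, str]], bool]) -> bool:
    """Accept only claims that reference an approved model and carry a valid proof."""
    if claim.model_commitment not in approved_models:
        return False
    public = (claim.model_commitment, claim.input_commitment, claim.decision)
    return verify(claim.proof, public)
```

A plain hash of the weights is binding but not hiding; if the model itself is proprietary, a random salt would need to be added to its commitment as well.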

NovaNet ZKP extends its guardianship to unruggable architectures, where smart contracts enforce agent behavior without human intervention. In agentic commerce, proofs confirm that transactions align with approved models, mitigating risks of misused funds or rogue actions. Game-theoretic prover cooperation underpins efficiency: provers collaborate rather than compete, distributing load and preserving confidentiality through threshold schemes. The result? Tamperproof AI that thrives in adversarial settings, where trust is not assumed but proven.

Consider multi-agent orchestration, a frontier where OpenClaw-like systems shine. Here, zkML guardrails interlock, each agent furnishing proofs for its segment of the workflow. Data remains siloed, and leaks are forestalled by design. Early implementations, drawing from ICME's work on succinct verifiability and from community experiments, demonstrate proofs that verify within seconds, even for intricate models. This efficiency beckons a future where AI agents roam freely, tethered only by cryptographic leashes.

Opinionated observers might decry the computational overhead of ZKPs, yet NovaNet's innovations substantially reduce it. Collaborative proving cuts latency, while modular frameworks adapt to evolving threats. In healthcare, agents analyze anonymized scans, proving diagnostic fidelity without exposing records. Finance benefits similarly: models forecast yields on private datasets, with outputs certified for institutional audits. This privacy-preserving training paradigm, where proofs supplant raw data, heralds a renaissance in compliant AI.

As autonomous agents proliferate, the imperative for NovaNet ZKP guardrails crystallizes. They do not merely prevent leaks; they architect trust at scale, enabling agents to wield power responsibly. Developers, researchers, and stewards of data alike stand to gain from this mathematical bulwark, ensuring that innovation proceeds not at privacy's expense, but in concert with it.
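
The article does not specify which threshold schemes the prover network uses. As one standard example, Shamir secret sharing splits a value into n shares so that any t of them reconstruct it, while fewer than t reveal nothing about it. The sketch below is a textbook implementation over the prime field 2^127 - 1 and is not a description of NovaNet's protocol; `split` and `reconstruct` are illustrative names.

```python
import secrets

P = 2**127 - 1  # Mersenne prime used as the field modulus


def split(secret: int, n: int, t: int):
    """Return n shares (x, f(x)) of a random degree-(t-1) polynomial with f(0) = secret."""
    if not 0 <= secret < P:
        raise ValueError("secret must lie in [0, P)")
    if not 1 <= t <= n:
        raise ValueError("need 1 <= t <= n")
    coeffs = [secret] + [secrets.randbelow(P) for _ in range(t - 1)]
    shares = []
    for x in range(1, n + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule, highest-degree coefficient first
            y = (y * x + c) % P
        shares.append((x, y))
    return shares


def reconstruct(shares):
    """Lagrange interpolation at x = 0 using any t distinct shares."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % P
                den = (den * (xi - xj)) % P
        secret = (secret + yi * num * pow(den, -1, P)) % P  # modular inverse (Python 3.8+)
    return secret
```

For example, `split(12345, n=5, t=3)` yields five shares, and any three of them passed to `reconstruct` return 12345, which is how a workload can be spread across provers without any single one holding the full secret.
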

The trajectory points unmistakably toward verifiable autonomy, where every action whispers its own proof of propriety.

Traditional vs zkML Guardrails Comparison

| Method | Privacy | Verifiability | Scalability | Cost |
|---|---|---|---|---|
| Traditional Guardrails | Low 🔓 | Trust-based ❌ | Limited 📉 | High 💸 |
| zkML (NovaNet) | High 🔒🔒 | Cryptographic ✅✅ | High 📈 | Optimized 📉💰 |