zkML for Private Verifiable Memory in AI Agents

Managing memory securely has become a central challenge for autonomous AI agents. These agents handle large amounts of sensitive data, from user preferences to proprietary strategies, yet traditional memory systems leave that data open to breaches and to manipulation that nobody can verify. zkML private memory addresses this by combining zero-knowledge proofs with machine learning, giving agents memory that is verifiable without sacrificing privacy. This isn’t just theory; recent progress makes it practical for builders crafting trustworthy systems.

Picture an AI agent in DeFi trading, recalling past decisions based on confidential market signals. Without robust safeguards, adversaries could tamper with its memory or steal insights. zkML steps in by generating proofs that computations on private data were carried out correctly, revealing nothing beyond the validity of the result. This zero-knowledge foundation lets agents demonstrate the integrity of their memory operations, fostering trust in decentralized environments.

The Trust Deficit in AI Agent Memory Systems

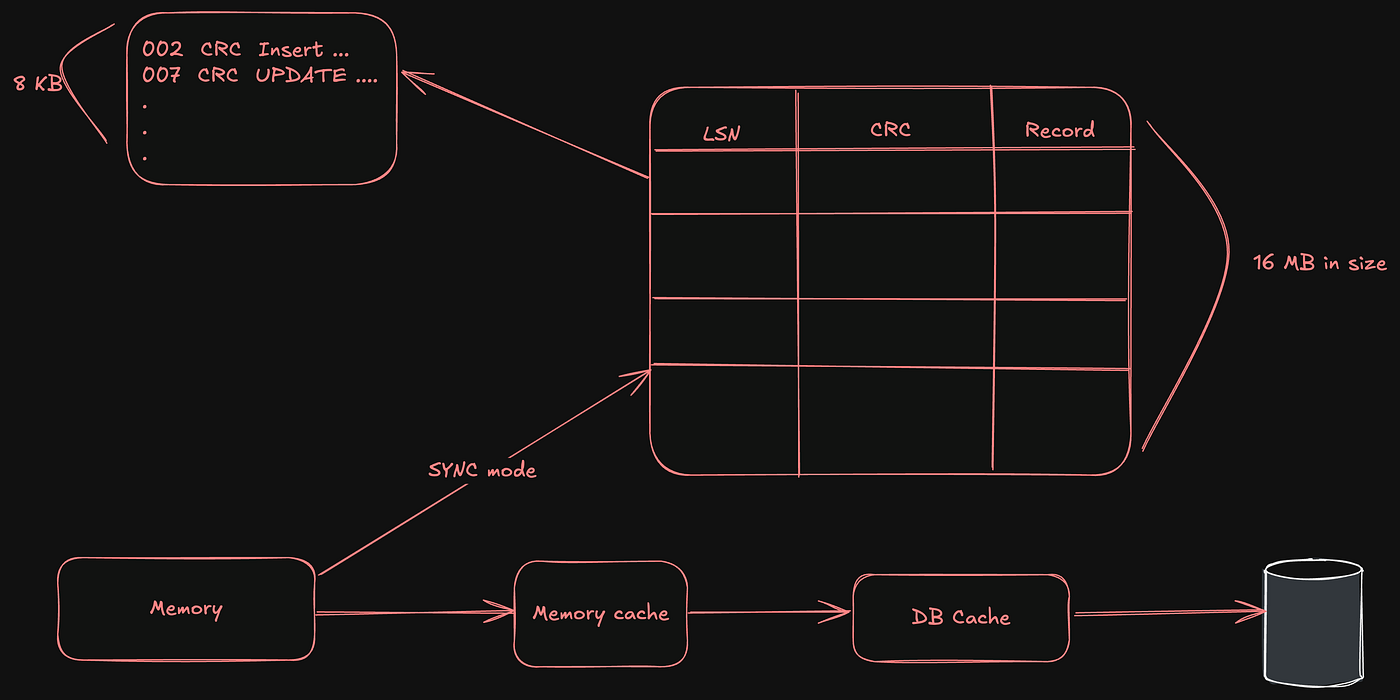

Centralized memory setups dominate today, but they breed vulnerabilities. Agents store episodic recollections, long-term knowledge, and working states in silos prone to silent alterations or leaks. The MemTrust architecture highlights this crisis, proposing hardware-backed zero-trust layers to cryptographically secure every memory tier. Researchers argue it’s essential for averting the fallout from tampered recollections, which could cascade into flawed decisions or exposed secrets.

Centralized memory systems invite a trust crisis; zkML offers cryptographic escape.

Consider healthcare agents analyzing patient histories or financial bots tracking portfolios. A single unverifiable update could skew outcomes catastrophically. Traditional audits demand data exposure, which clashes with privacy mandates. This is where provable deletion comes in: agents prove that data was erased without revealing the data itself, which is vital for compliance-heavy sectors.

Key Vulnerabilities in AI Agent Memory

- Data leakage during shared computations: Sensitive data is exposed when AI agents collaborate across untrusted networks without privacy guarantees.
- Tampering without detection: Malicious alterations to memory states leave no cryptographic traces, enabling undetectable sabotage.
- Historical state verification gap: Traditional systems cannot cryptographically prove the integrity of past memory states.
- Compliance hurdles for sensitive data: Meeting GDPR/HIPAA requirements is difficult without provable privacy in data handling.
- Scalability limits under privacy: Privacy-preserving methods become a performance bottleneck for AI agents at scale.
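To make the tampering and historical-verification gaps concrete, here is a minimal sketch of a hash-chained memory log. It is an illustration only, not a zkML construction and not taken from any framework named in this article; `MemoryLog`, `entry_digest`, and `verify_log` are hypothetical names. Each entry's digest covers the previous digest, so altering any past entry changes the head that was published earlier.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder digest standing in for "no previous entry"


def entry_digest(prev_digest, payload):
    """Hash a memory entry together with the digest of the entry before it."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_digest.encode("utf-8") + body).hexdigest()


class MemoryLog:
    """Append-only log in which every entry commits to all earlier entries."""

    def __init__(self):
        self.entries = []
        self.digests = []

    def append(self, payload):
        prev = self.digests[-1] if self.digests else GENESIS
        digest = entry_digest(prev, payload)
        self.entries.append(payload)
        self.digests.append(digest)
        return digest

    def head(self):
        return self.digests[-1] if self.digests else GENESIS


def verify_log(entries, expected_head):
    """Recompute the chain and compare it with a previously published head."""
    digest = GENESIS
    for payload in entries:
        digest = entry_digest(digest, payload)
    return digest == expected_head


# Illustrative usage with made-up entries.
log = MemoryLog()
log.append({"type": "episodic", "note": "user prefers low-risk trades"})
log.append({"type": "semantic", "note": "volatility often rises before earnings"})
published_head = log.head()
print(verify_log(log.entries, published_head))  # chain is intact

log.entries[0]["note"] = "user prefers high-risk trades"  # silent alteration
print(verify_log(log.entries, published_head))  # alteration is detected
```

A chain like this only gives integrity: a verifier has to see the entries to recheck them. The zero-knowledge layer discussed in the following sections is what would let an agent prove statements about such a log without revealing its contents.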

Unlocking zkML for Zero-Knowledge AI Agents

At its core, zkML proves that a machine learning inference or training run executed faithfully on hidden inputs. For zero-knowledge AI agents, this translates to memory operations in which the agent attests that a recall was accurate without disclosing what was recalled. Polyhedra’s zkML framework exemplifies this, supporting CNNs and Transformers with proofs generated in seconds via PyTorch integration. Developers can retrofit existing models with little rework, turning opaque agents into transparent performers.

Mina Protocol’s zkML library amplifies this by enabling proofs from private inference jobs. An agent processes confidential inputs, outputs a result, and attaches a succinct proof any verifier accepts. No model weights or data spill; just mathematical certainty. This shifts agents from black boxes to provable entities, ideal for collaborative ecosystems where multiple parties query memory without owning it.

Collaborative Advances Propelling Practical zkML

Partnerships such as the one between Allora and Polyhedra fingerprint models uniquely so that their authenticity can be verified and tampering detected. This tackles model poisoning, a stealthy threat in which bad actors subtly corrupt an agent’s model or memory. By wrapping inferences in zkML proofs, agents broadcast verifiable outputs, letting networks aggregate insights securely.
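As a rough illustration of what a model fingerprint can look like, the sketch below hashes named weight vectors in a canonical order. This is an assumption for illustration, not the Allora or Polyhedra scheme, and `fingerprint_weights` is a hypothetical helper. Weights are modeled as plain lists of floats to keep the example free of dependencies.

```python
import hashlib
import struct


def fingerprint_weights(weights):
    """Return a SHA-256 digest over named weight vectors in a canonical order.

    `weights` maps a parameter name to a list of floats.
    """
    hasher = hashlib.sha256()
    for name in sorted(weights):  # sorted names make the result order-independent
        values = weights[name]
        encoded_name = name.encode("utf-8")
        # Length prefixes keep adjacent fields from running into each other.
        hasher.update(struct.pack(">I", len(encoded_name)))
        hasher.update(encoded_name)
        hasher.update(struct.pack(">I", len(values)))
        # Big-endian IEEE 754 doubles give the same bytes on every platform.
        hasher.update(struct.pack(">%dd" % len(values), *values))
    return hasher.hexdigest()


# Illustrative usage with made-up parameters.
model = {"layer1.weight": [0.25, -0.5, 1.0], "layer1.bias": [0.1]}
poisoned = {"layer1.weight": [0.25, -0.5, 1.0001], "layer1.bias": [0.1]}

print(fingerprint_weights(model) == fingerprint_weights(dict(reversed(list(model.items())))))
print(fingerprint_weights(model) == fingerprint_weights(poisoned))
```

A published fingerprint lets anyone holding the weights confirm they match. Proving that an inference used the fingerprinted model without revealing the weights is the part that needs a zero-knowledge proof.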

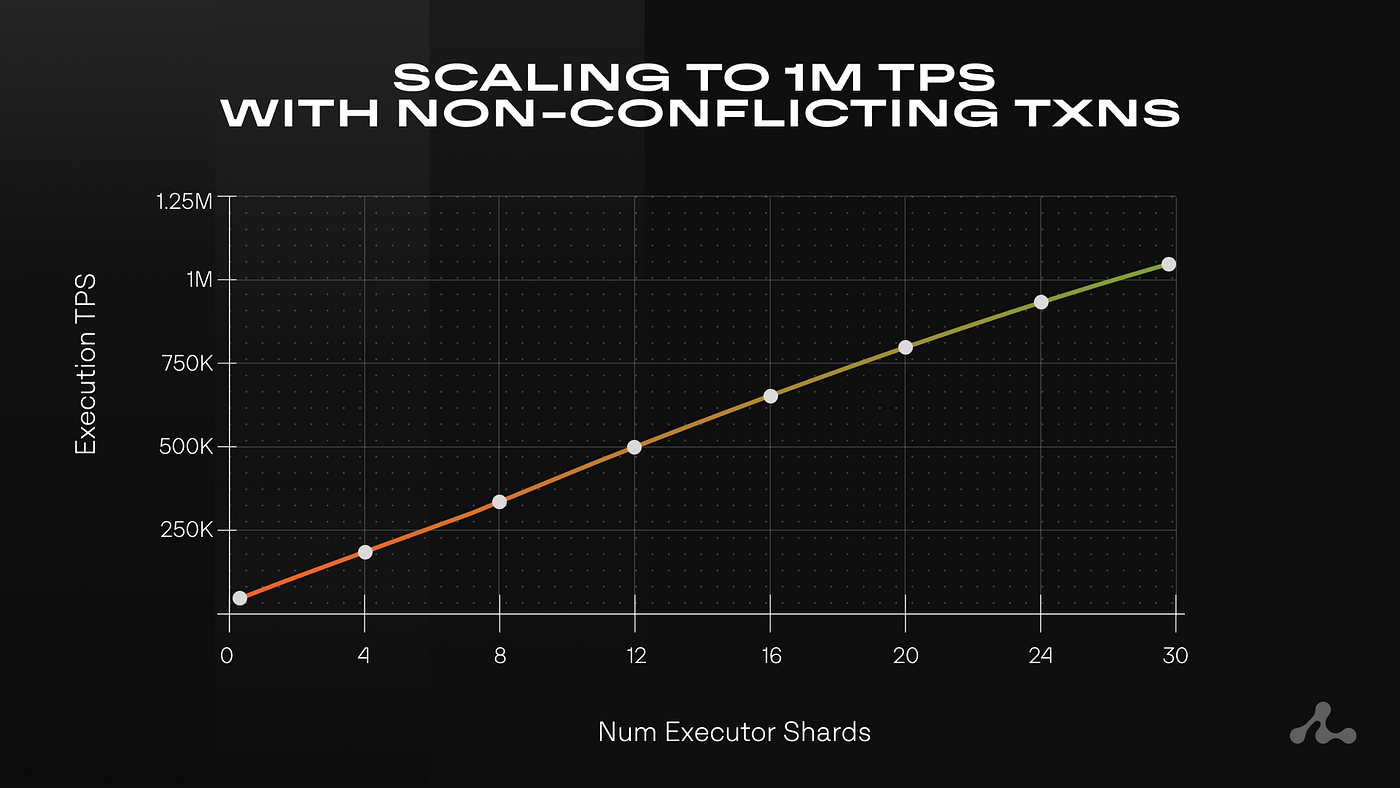

The Artemis framework pushes the efficiency frontier with commit-and-prove SNARKs, cutting prover costs for large models. Previously, zkML felt cumbersome; now it is becoming deployable at scale. Imagine agents in web3 finance whose verifiable memory can be audited on-chain. As a trader who blends fundamental analysis with zkML-enhanced signals, I see this enabling portfolios that evolve privately yet accountably.

These innovations converge on a unified promise: AI agents with memory that’s both private and provable. Builders gain tools to embed zkML private memory natively, sidestepping trust assumptions plaguing legacy systems.

Integrating zkML into agent architectures demands thoughtful design, starting with memory compartmentalization. Agents can partition episodic memory for user-specific events, semantic stores for generalized knowledge, and procedural buffers for decision logic, each zk-wrapped. Polyhedra’s framework shines here, converting PyTorch models into proof circuits that verify retrievals without unpacking contents. A trading agent, for instance, recalls volatility patterns from private datasets, proves the inference chain, and acts confidently, all while shielding strategies from competitors.
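One way to picture the compartmentalization described above is to give each memory tier its own Merkle root. The sketch below is a simplified assumption, not Polyhedra's circuit format; the function names and sample records are hypothetical. Note that the inclusion check shown here reveals the record being checked. In a zkML setting, the same check would run inside a proof circuit with only the root made public.

```python
import hashlib


def _h(data):
    return hashlib.sha256(data).digest()


def leaf_hash(record):
    return _h(b"\x00" + record.encode("utf-8"))  # domain-separate leaves from nodes


def node_hash(left, right):
    return _h(b"\x01" + left + right)


def merkle_levels(records):
    """Return every tree level, leaves first. An odd node is paired with itself."""
    if not records:
        raise ValueError("at least one record is required")
    level = [leaf_hash(r) for r in records]
    levels = [level]
    while len(level) > 1:
        padded = level + [level[-1]] if len(level) % 2 else level
        level = [node_hash(padded[i], padded[i + 1]) for i in range(0, len(padded), 2)]
        levels.append(level)
    return levels


def merkle_root(records):
    return merkle_levels(records)[-1][0]


def inclusion_proof(records, index):
    """List of (sibling_hash, sibling_is_on_right) pairs from leaf to root."""
    proof = []
    for level in merkle_levels(records)[:-1]:
        sibling = index ^ 1
        if sibling >= len(level):
            sibling = index  # odd node was paired with itself
        proof.append((level[sibling], index % 2 == 0))
        index //= 2
    return proof


def verify_inclusion(record, proof, root):
    digest = leaf_hash(record)
    for sibling, sibling_on_right in proof:
        digest = node_hash(digest, sibling) if sibling_on_right else node_hash(sibling, digest)
    return digest == root


# Illustrative compartments with made-up records.
compartments = {
    "episodic": ["user asked about ETH exposure", "user set a stop-loss", "user paused trading"],
    "semantic": ["volatility clusters around announcements"],
    "procedural": ["rebalance when drift exceeds threshold", "never exceed position cap"],
}
roots = {name: merkle_root(records) for name, records in compartments.items()}

proof = inclusion_proof(compartments["episodic"], 2)
print(verify_inclusion("user paused trading", proof, roots["episodic"]))
```

Keeping a separate root per tier means an agent can update or prove facts about episodic memory without touching the commitments for semantic or procedural memory. Duplicating the last node on odd levels is a simplification; production trees usually handle odd levels differently.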

This privacy layer extends to multi-agent collaborations. In decentralized networks, agents query peers’ memories via proof challenges, aggregating verifiable insights without the risks of pooling raw data. My forums at zkmlai.org buzz with builders prototyping such systems, sharing open-source circuits for Transformer-based recall modules. The result? Ecosystems where trust emerges from math, not middlemen.

Comparison: Traditional AI Memory vs zkML Private Memory

| Aspect | Traditional AI Memory | zkML Private Memory |
|---|---|---|
| Privacy | Low: Data often exposed in centralized storage, vulnerable to breaches ❌ | High: Zero-knowledge proofs hide sensitive data during verification 🔒 |
| Verifiability | Low: Relies on trust in providers or logs, no cryptographic guarantees 👥 | High: Cryptographic ZKPs prove computation correctness without revealing inputs ✅ |
| Scalability | High: Efficient for large-scale data but privacy-compromised 📈 | Improving: Frameworks like Polyhedra zkML and Artemis enable efficient proofs for CNNs/Transformers 🚀 |
| Tamper Resistance | Moderate: Dependent on access controls, susceptible to insider attacks ⚠️ | High: Immutable ZK proofs and architectures like MemTrust ensure tamper-proof memory 🛡️ |
| Use Cases | General AI apps, cloud databases, vector stores | Private AI agents, verifiable inference (Mina zkML), finance/healthcare/web3, MemTrust zero-trust systems 🌐 |

Overcoming Hurdles: Provable Deletion and Beyond

Deletion poses a thornier puzzle. Agents must purge obsolete or sensitive recollections, yet prove compliance to regulators. Provable deletion via zkML constructs succinct, timestamped zero-knowledge arguments of erasure. Imagine a finance agent discarding trade histories post-audit; it generates a proof attesting that the deletion was carried out correctly, supporting GDPR compliance without exposing logs. MemTrust bolsters this with hardware roots of trust, anchoring software proofs to tamper-evident silicon.
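The statement behind such a deletion proof can be sketched as a transition between two memory commitments: the new commitment must equal the old record set with exactly one record removed. The code below checks that relation in the clear; it is a hypothetical illustration, not a zero-knowledge proof and not MemTrust's design. In a real system the check would run inside a circuit, so the verifier sees only the two commitments. It also shows only that the committed state no longer contains the record, not that every physical copy is gone.

```python
import hashlib


def commit(records):
    """Order-sensitive commitment: a hash over the hashes of all records."""
    hasher = hashlib.sha256()
    for record in records:
        hasher.update(hashlib.sha256(record.encode("utf-8")).digest())
    return hasher.hexdigest()


def delete_record(records, index):
    """Remove one record and return the new list plus the public claim."""
    if not 0 <= index < len(records):
        raise IndexError("no record at that index")
    remaining = records[:index] + records[index + 1:]
    claim = {"root_before": commit(records), "root_after": commit(remaining)}
    return remaining, claim


def check_deletion(records_before, index, claim):
    """The relation a circuit would enforce, evaluated here on plain data."""
    if not 0 <= index < len(records_before):
        return False
    remaining = records_before[:index] + records_before[index + 1:]
    return (
        commit(records_before) == claim["root_before"]
        and commit(remaining) == claim["root_after"]
    )


# Illustrative usage with made-up trade records.
history = ["trade A", "trade B", "trade C"]
remaining, claim = delete_record(history, 1)
print(remaining)
print(check_deletion(history, 1, claim))   # correct transition
print(check_deletion(history, 0, claim))   # wrong record claimed as deleted
```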

Challenges linger, chiefly proof generation latency and circuit complexity for massive models. Artemis mitigates the former through optimized SNARKs, committing intermediates before full proofs to cut recursion overheads. Prover times plummet, enabling real-time agent responses. Still, hybrid approaches blend zkML with trusted execution environments for bootstrapping, evolving toward pure crypto as tools mature.
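The "commit first, prove later" idea can be illustrated with a basic salted hash commitment. This is a generic textbook construction chosen for illustration, not the commitment scheme Artemis uses; `commit` and `open_commitment` are hypothetical names. The prover publishes a commitment to an intermediate value up front and can later show that the value it works with is the one it committed to.

```python
import hashlib
import hmac
import secrets


def commit(value):
    """Commit to `value` (bytes). Returns (commitment_hex, nonce).

    The random nonce hides the value; the hash binds the prover to it.
    """
    nonce = secrets.token_bytes(32)
    commitment = hashlib.sha256(nonce + value).hexdigest()
    return commitment, nonce


def open_commitment(commitment, value, nonce):
    """Check that (value, nonce) opens the earlier commitment."""
    expected = hashlib.sha256(nonce + value).hexdigest()
    return hmac.compare_digest(expected, commitment)


# Illustrative usage: commit to a made-up intermediate activation.
activation = b"layer-3 output: [0.12, 0.87, 0.01]"
commitment, nonce = commit(activation)

print(open_commitment(commitment, activation, nonce))           # honest opening
print(open_commitment(commitment, b"different value", nonce))   # rejected
```

In a commit-and-prove system the opening is not revealed like this. Instead, the proof shows that the committed value was used in the computation, which is what lets large models be handled in committed pieces.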

Opinionated take: zkML isn’t a silver bullet, but paired with agentic frameworks like LangChain or AutoGPT, it forges resilient minds. I’ve stress-tested prototypes fusing my DeFi signals with zk-verified embeddings; the privacy uplift transforms marginal edges into sustainable alphas. Developers, prioritize modular proofs early; retrofits compound costs.

Real-World Traction and Builder Playbook

Deployments underscore viability. Mina’s library powers inference proofs in lightweight chains, suiting mobile agents. Allora-Polyhedra alliances fingerprint models on-chain, letting agents advertise capabilities credibly. Unruggable agents, as DEV tutorials tout, leverage ZK for seamless, secure experiences in AI marketplaces.

For hands-on builders: Begin with Polyhedra’s PyTorch exporter, craft a simple memory lookup circuit, and benchmark proofs on testnets. Integrate via oracles for off-chain compute, settling on-chain. Communities dissect surveys like arXiv’s ZKML roundup, iterating on gaps. This collaborative ethos, core to zkmlai.org, accelerates adoption.

zkML redefines agent longevity. No longer fragile state machines, agents embody sovereign intelligence: private yet accountable, autonomous yet auditable. As AI agents with verifiable memory proliferate in finance, healthcare, and web3, the zkML stack equips us to harness their power responsibly. Traders like me, blending zk-enhanced technicals with fundamentals, stand ready to navigate this verifiable frontier.