In the evolving landscape of decentralized finance and AI, zkML deployments on Ethereum stand out as a conservative yet transformative approach to private neural network inference. By leveraging zero-knowledge proofs, developers can run machine learning models off-chain and have Ethereum verify the results without exposing sensitive inputs, making AI inference verifiable on-chain while preserving data sovereignty. This fusion addresses longstanding concerns in financial modeling, where proprietary datasets demand ironclad privacy.

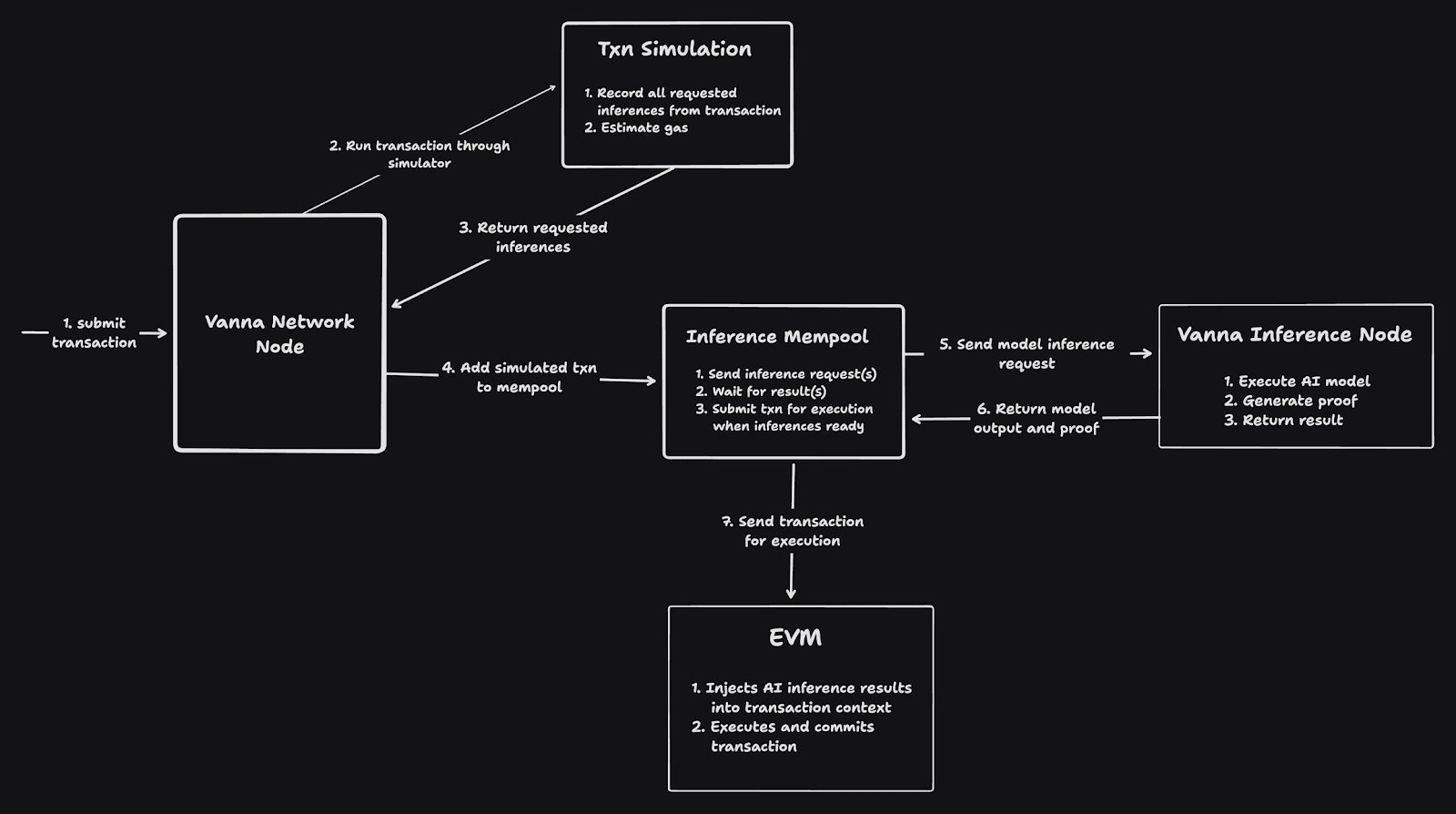

Traditional neural networks falter on public blockchains because of transparency requirements: every computation is laid bare. zkML on Ethereum changes this calculus. Projects like 'Proofs of Inference' pioneer decentralized marketplaces where users request private inferences, model providers compile their models into zk-SNARK circuits and generate proofs, and those proofs are anchored on Ethereum while model artifacts are stored on Filecoin. Because checking a succinct proof is far cheaper than re-running the model, such systems compress verification and cut gas costs while upholding integrity.

Core Mechanics of ZK Proofs in Neural Network Inference

At its heart, zkML on Ethereum hinges on arithmetic circuits tailored for neural operations. Matrix multiplications and activations, once prohibitive in proof systems, now yield succinct proofs via libraries such as Mina's or open-source ZKML frameworks. Consider a feedforward network: inputs remain private, weights may be public or committed via a hash, and the proof attests that the output was computed correctly.
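To make the "arithmetic circuit" idea concrete, here is a minimal sketch that expresses one dense layer y = W·x + b as multiplication and addition constraints over the BN254 scalar field (the prime used by circom and many Ethereum SNARK verifiers) and checks a witness against them. It is a constraint checker, not a prover: in a real zkML stack a SNARK takes the place of `check_constraints`, so the verifier never sees the private inputs. The function names are hypothetical.

```python
# BN254 scalar field modulus (the default prime in circom and many Ethereum verifiers).
P = 21888242871839275222246405745257275088548364400416034343698204186575808495617


def dense_witness(W, x, b):
    """Off-chain witness generation: every intermediate value of y = W.x + b (mod P)."""
    products = [[(w * xi) % P for w, xi in zip(row, x)] for row in W]
    outputs = [(sum(prods) + bj) % P for prods, bj in zip(products, b)]
    return products, outputs


def check_constraints(W, x, b, products, outputs):
    """Check each multiplication gate (w * x == prod) and each linear sum gate."""
    for row, prods in zip(W, products):
        for w, xi, pr in zip(row, x, prods):
            if (w * xi - pr) % P != 0:
                return False
    for prods, bj, y in zip(products, b, outputs):
        if (sum(prods) + bj - y) % P != 0:
            return False
    return True


# Usage: a 2x2 layer with small integer (already quantized) weights.
W, x, b = [[1, 2], [3, 4]], [5, 6], [7, 8]
products, outputs = dense_witness(W, x, b)
print(outputs)                                         # [24, 47]
print(check_constraints(W, x, b, products, outputs))   # True
print(check_constraints(W, x, b, products, [25, 47]))  # False: tampered output
```

Additions are linear and essentially free in many proof systems; the multiplication gates are what drive circuit size, which is why matrix multiplication dominates proving cost.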

ZKML facilitates on-chain model deployment in decentralized networks, compressing verification through zero-knowledge proofs.

This matters profoundly for verifiable AI inference on blockchains. In portfolio optimization, for instance, a neural network processes confidential holdings against market signals and outputs allocation advice that is proven correct without disclosing those holdings. Conservative strategists appreciate this: no trust in oracles or custodians, just cryptographic certainty.

Real-World Ethereum Projects Driving zkML Adoption

The 'Proofs of Inference' initiative exemplifies practical zkML Ethereum utility. It crafts a marketplace for inferences: requesters post jobs, provers compute off-chain with private data, submit Ethereum-verified proofs, and settle payments trustlessly. Coupled with Filecoin for model artifacts, it scales without central chokepoints.
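The marketplace flow can be modelled as a small state machine. This is a hypothetical sketch, not the 'Proofs of Inference' contract: `InferenceMarket`, its methods, and the injected `verifier` callback (standing in for an on-chain SNARK verifier) are illustrative assumptions, and payments are plain integers held in escrow until a valid proof arrives.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Job:
    requester: str
    model_id: str
    payment: int          # held in escrow until a valid proof arrives
    status: str = "open"  # "open" -> "settled"
    prover: str = ""


@dataclass
class InferenceMarket:
    # Stand-in for an on-chain SNARK verifier: (model_id, proof, output) -> bool.
    verifier: Callable[[str, bytes, int], bool]
    jobs: Dict[int, Job] = field(default_factory=dict)
    balances: Dict[str, int] = field(default_factory=dict)
    next_id: int = 0

    def post_job(self, requester: str, model_id: str, payment: int) -> int:
        job_id = self.next_id
        self.jobs[job_id] = Job(requester, model_id, payment)
        self.next_id += 1
        return job_id

    def submit_proof(self, job_id: int, prover: str, proof: bytes, output: int) -> bool:
        job = self.jobs[job_id]
        if job.status != "open":
            raise ValueError("job already settled")
        if not self.verifier(job.model_id, proof, output):
            return False  # rejected: escrow stays locked and the job stays open
        job.status, job.prover = "settled", prover
        self.balances[prover] = self.balances.get(prover, 0) + job.payment
        return True


# Usage with a dummy verifier that accepts only the proof b"ok".
market = InferenceMarket(verifier=lambda model_id, proof, output: proof == b"ok")
job_id = market.post_job("alice", "credit-model-v1", payment=100)
print(market.submit_proof(job_id, "bob", b"forged", output=1))  # False
print(market.submit_proof(job_id, "carol", b"ok", output=1))    # True
print(market.balances)                                          # {'carol': 100}
```

Keeping the job open after a rejected proof lets another prover claim it; a production design would also need deadlines and refunds.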

Parallel efforts like 'ZKAudit' extend this to model auditing. Using ZKML libraries, they prove executions of tensor graphs, verifying training fidelity on-chain. This underpins data marketplaces where sellers attest to computations without revealing datasets, which is vital for financial AI: integrating zero-knowledge proofs of neural networks with Ethereum guards against tampered or substituted computations.
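Attesting to a computation without revealing the dataset usually starts with a commitment: the seller publishes a salted hash of the data or weights, and the circuit later proves, with the data as a private witness, that its inputs open that commitment. The sketch below assumes SHA-256 over a JSON encoding for simplicity; production circuits typically prefer SNARK-friendly hashes such as Poseidon, and the helper names are hypothetical.

```python
import hashlib
import json
import secrets


def commit(values, salt: bytes) -> str:
    """Salted SHA-256 commitment to a list of (quantized) integers."""
    payload = salt + json.dumps(values).encode()
    return hashlib.sha256(payload).hexdigest()


def opens(commitment: str, values, salt: bytes) -> bool:
    """The check a circuit would enforce privately: do these values open the commitment?"""
    return commit(values, salt) == commitment


# Usage: the seller publishes only `c`; the weights and salt stay private.
salt = secrets.token_bytes(32)  # random salt hides low-entropy data from brute force
weights = [12, -7, 3, 40]
c = commit(weights, salt)
print(opens(c, weights, salt))          # True
print(opens(c, [12, -7, 3, 41], salt))  # False
```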

These advancements, rooted in 0xPARC tutorials and ChainScore Labs guides, demystify circuit design for convolutions and embeddings. Yet challenges persist: proof generation latency demands optimization, and Ethereum's gas limits favor recursive proofs or layer-2 rollups. From a value investing lens, early zkML protocols on Ethereum offer asymmetric upside, blending AI potency with blockchain verifiability.

Building Blocks: Converting ML Models to ZK Circuits

Transitioning neural networks to zkML on Ethereum requires methodical decomposition. Begin by quantizing the model to fixed-point arithmetic, which maps naturally onto SNARK constraints over a finite field. Tools listed in GitHub's awesome-zero-knowledge repos, including RISC0 implementations, automate much of this, outputting circuits for Ethereum-compatible verifiers.
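A minimal sketch of the quantization step, assuming 16 fractional bits and the BN254 scalar field: reals are scaled to integers, and negative values wrap around the modulus. Multiplying two fixed-point values doubles the scale, so each product needs a rescale (here a floor shift); inside a circuit that shift itself costs constraints. `FRAC_BITS` and the helper names are illustrative choices, not taken from any particular framework.

```python
# BN254 scalar field modulus.
P = 21888242871839275222246405745257275088548364400416034343698204186575808495617
FRAC_BITS = 16  # assumed precision: 16 fractional bits
SCALE = 1 << FRAC_BITS


def to_field(x: float) -> int:
    """Quantize a real to fixed point; negatives wrap to P - |q|."""
    return round(x * SCALE) % P


def to_signed(v: int) -> int:
    """Interpret field elements above P // 2 as negative integers."""
    return v - P if v > P // 2 else v


def from_field(v: int) -> float:
    return to_signed(v) / SCALE


def fixed_mul(a: int, b: int) -> int:
    """Multiply two fixed-point field elements, then rescale by 2**FRAC_BITS (floor)."""
    return ((to_signed(a) * to_signed(b)) >> FRAC_BITS) % P


# Usage
w, x = to_field(1.5), to_field(-2.0)
print(x == P - 131072)              # True: -2.0 * 2**16 wrapped around the modulus
print(from_field(fixed_mul(w, x)))  # -3.0
```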

For private neural network inference with zkML, witness generation happens off-chain to preserve efficiency; only the succinct proof reaches L1 (a Groth16 proof on BN254, for example, is 128 bytes compressed or 256 bytes uncompressed). Binance deep dives highlight custom gates for ML operations that let non-linearities like ReLU fit finite fields without approximation pitfalls. Opinion: while exuberant hype swirls, zkML's conservative appeal lies in its mathematical rigor, sidestepping the probabilistic failures that plague other privacy tech.
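One common way to express ReLU exactly in a finite field is bit decomposition: shift the signed input into [0, 2^n), decompose it into boolean bits, and use the top bit as the sign. The sketch below shows that generic technique (it is not the specific custom gate the Binance material describes) and assumes inputs satisfy |x| < 2^31; the names are hypothetical.

```python
# BN254 scalar field modulus.
P = 21888242871839275222246405745257275088548364400416034343698204186575808495617
N_BITS = 32  # assumed bound: signed inputs satisfy |x| < 2**31


def relu_witness(x: int):
    """Witness for ReLU on a field-encoded signed integer x."""
    shifted = (x + (1 << (N_BITS - 1))) % P
    if shifted >= 1 << N_BITS:
        raise ValueError("input outside the assumed range")
    bits = [(shifted >> i) & 1 for i in range(N_BITS)]
    y = (bits[-1] * x) % P  # top bit is 1 exactly when x >= 0
    return bits, y


def relu_constraints_hold(x: int, bits, y: int) -> bool:
    if len(bits) != N_BITS:
        return False
    # Booleanity: b * (b - 1) == 0 forces every bit into {0, 1}.
    if any(b * (b - 1) % P != 0 for b in bits):
        return False
    # Decomposition: the bits must recompose to x + 2**(N_BITS - 1).
    recomposed = sum(b << i for i, b in enumerate(bits))
    if (recomposed - x - (1 << (N_BITS - 1))) % P != 0:
        return False
    # Output gate: y == sign_bit * x.
    return (bits[-1] * x - y) % P == 0


# Usage
neg = P - 5  # field encoding of -5
bits, y = relu_witness(neg)
print(y, relu_constraints_hold(neg, bits, y))  # 0 True
bits, y = relu_witness(7)
print(y, relu_constraints_hold(7, bits, y))    # 7 True
print(relu_constraints_hold(7, bits, 3))       # False: wrong output
```

The 32 booleanity constraints behind a single ReLU illustrate why non-linearities cost far more than additions in a circuit.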

Quantization introduces precision loss, a trade-off conservative practitioners accept for proof feasibility. Once the model is circuit-ready, compilation yields a proving key and a verification key: the verification key is embedded in a verifier contract deployed on Ethereum, while the proving key stays with the prover. This process, detailed in ICME's definitive guide and Kudelski Security analyses, underscores zkML's maturity for production use on Ethereum.
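The precision cost is easy to measure. This sketch, assuming weights and inputs drawn uniformly from [-1, 1] and a 64-element dot product, compares a fixed-point evaluation against floating point for several fractional bit widths. Because each quantized value is off by at most 2^-(F+1), each product is off by at most 2^-F + 2^-(2F+2), which gives an analytic bound to check against.

```python
import random


def quantize(x: float, frac_bits: int) -> int:
    return round(x * (1 << frac_bits))


def fixed_dot(ws, xs, frac_bits: int) -> float:
    # Each product carries scale 2^(2F); rescale once after exact integer accumulation.
    acc = sum(quantize(w, frac_bits) * quantize(x, frac_bits) for w, x in zip(ws, xs))
    return acc / (1 << (2 * frac_bits))


def max_error(frac_bits: int, trials: int = 200, dim: int = 64, seed: int = 0) -> float:
    """Worst absolute error versus float over random trials (fixed seed for repeatability)."""
    rng = random.Random(seed)
    worst = 0.0
    for _ in range(trials):
        ws = [rng.uniform(-1, 1) for _ in range(dim)]
        xs = [rng.uniform(-1, 1) for _ in range(dim)]
        exact = sum(w * x for w, x in zip(ws, xs))
        worst = max(worst, abs(fixed_dot(ws, xs, frac_bits) - exact))
    return worst


for bits in (4, 8, 16):
    print(bits, max_error(bits))
```

More fractional bits shrink the error but widen every value, which raises the cost of range checks and rescaling inside the circuit.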

Ethereum-Specific Deployment Strategies

Deploying to Ethereum demands either Layer 1 optimization or Layer 2 scaling. Succinct zk-SNARKs shine here: a proof's small, fixed size avoids calldata bloat, making verifiable AI inference affordable at a fraction of L1 costs on EVM-compatible rollups such as Optimism. Mina Protocol's zkML library offers recursion primitives that aggregate many inferences into a single on-chain attestation. For financial workflows, this means batch-proving portfolio predictions and settling disputes with immutable proofs.
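A back-of-the-envelope estimate shows why aggregation matters. The sketch uses the BN254 precompile prices set by EIP-1108 (ecAdd 150 gas, ecMul 6,000, pairing 45,000 plus 34,000 per pair) and assumes a Groth16 verifier that performs one four-pair pairing check plus one ecMul and one ecAdd per public input. It counts precompile gas only, ignoring calldata and contract overhead, and it assumes a recursive proof can cover an entire batch with a single public input.

```python
# BN254 precompile gas prices after EIP-1108 (Istanbul).
ECADD_GAS = 150
ECMUL_GAS = 6_000
PAIRING_BASE_GAS = 45_000
PAIRING_PER_PAIR_GAS = 34_000


def groth16_precompile_gas(num_public_inputs: int) -> int:
    """Lower bound for one Groth16 check: input MSM plus a 4-pair pairing check.
    Excludes calldata, memory, and other contract overhead."""
    msm = num_public_inputs * (ECMUL_GAS + ECADD_GAS)
    pairing = PAIRING_BASE_GAS + 4 * PAIRING_PER_PAIR_GAS
    return msm + pairing


def amortized_gas(num_inferences: int, public_inputs_per_proof: int = 1) -> float:
    """Per-inference gas if one aggregated proof covers the whole batch (assumption)."""
    return groth16_precompile_gas(public_inputs_per_proof) / num_inferences


print(groth16_precompile_gas(1))  # 187150
print(amortized_gas(100))         # 1871.5
```

Under these assumptions a single proof costs about 187,150 gas in precompiles, and spreading it over 100 inferences brings that to roughly 1,872 gas each.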

In practice, EIP-4844 blobs offer cheaper, though temporary, data availability for proof-related data, as 'Proofs of Inference' demonstrates. Provers stake collateral against proof validity and are slashed on failure, aligning incentives conservatively. This mirrors value investing principles: downside protection via bond-like mechanisms encoded in smart contracts.
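The stake-and-slash mechanism can be sketched as a registry of bonds. `ProverRegistry`, the 50% slash rate, and the rule that slashed funds go to a treasury are hypothetical parameters chosen for illustration; real protocols also need a dispute or challenge process to decide when a proof has failed.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ProverRegistry:
    min_stake: int
    slash_bps: int = 5_000  # hypothetical: slash 50% of the bond per failure (basis points)
    stakes: Dict[str, int] = field(default_factory=dict)
    treasury: int = 0

    def deposit(self, prover: str, amount: int) -> None:
        self.stakes[prover] = self.stakes.get(prover, 0) + amount

    def eligible(self, prover: str) -> bool:
        """Only provers with at least `min_stake` bonded may take jobs."""
        return self.stakes.get(prover, 0) >= self.min_stake

    def report(self, prover: str, proof_valid: bool) -> int:
        """Record a proof outcome; on failure, move part of the bond to the treasury."""
        if proof_valid:
            return 0
        stake = self.stakes.get(prover, 0)
        penalty = stake * self.slash_bps // 10_000
        self.stakes[prover] = stake - penalty
        self.treasury += penalty
        return penalty


# Usage
registry = ProverRegistry(min_stake=100)
registry.deposit("bob", 150)
print(registry.eligible("bob"))        # True
print(registry.report("bob", False))   # 75
print(registry.eligible("bob"))        # False: bond fell below the minimum
```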

Profiling reveals the bottlenecks: matrix operations dominate prover cycles. Lookup arguments from 0xPARC frameworks mitigate this, cutting proving times by as much as 10x. Vid Kersic's demystification on Medium aptly notes: zkML swims through computational concrete, yet Ethereum's EVM evolution paves smoother paths.
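Lookup arguments replace per-operation constraints with membership checks against a precomputed table. The sketch below implements the log-derivative (LogUp) identity, one published lookup technique and not necessarily what the 0xPARC frameworks use: a multiset of lookups f_i is contained in a table t_j with multiplicities m_j exactly when the sum of 1/(alpha - f_i) equals the sum of m_j/(alpha - t_j) for a random challenge alpha, with (input, output) pairs folded together by a second challenge beta. The clamped 4-bit activation table is a hypothetical example, and multiplicities are computed locally rather than committed by a prover.

```python
import random
from collections import Counter

# BN254 scalar field modulus.
P = 21888242871839275222246405745257275088548364400416034343698204186575808495617


def activation_table():
    """Hypothetical table: 4-bit signed inputs in [-8, 8), output clamped to [-4, 4]."""
    return [(x % P, max(-4, min(4, x)) % P) for x in range(-8, 8)]


def compress(pair, beta: int) -> int:
    """Fold an (input, output) pair into one field element."""
    x, y = pair
    return (x + beta * y) % P


def inv(a: int) -> int:
    return pow(a, P - 2, P)  # Fermat inverse; P is prime


def logup_check(lookups, table, rng: random.Random) -> bool:
    """Check sum 1/(alpha - f_i) == sum m_j/(alpha - t_j) at random challenges.
    In a real protocol the prover commits to the multiplicities before alpha is drawn."""
    alpha, beta = rng.randrange(P), rng.randrange(P)
    counts = Counter(lookups)
    multiplicities = [counts[row] for row in table]
    lhs = sum(inv((alpha - compress(f, beta)) % P) for f in lookups) % P
    rhs = sum(m * inv((alpha - compress(t, beta)) % P)
              for m, t in zip(multiplicities, table)) % P
    return lhs == rhs


table = activation_table()
rng = random.Random(2024)
honest = [(2, 2), (2, 2), (6, 4)]       # rows present in the table
forged = honest + [(3, 2)]              # clamp(3) is 3, not 2
print(logup_check(honest, table, rng))  # True
print(logup_check(forged, table, rng))  # False (except with negligible probability)
```

The verifier's work no longer depends on how the activation is computed, only on the table, which is why lookups pay off for non-linearities that would otherwise need many constraints.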

Consider alpha generation: a neural network ingests proprietary trade data, infers signals privately, and proves its output against public benchmarks. Deployed with zkML on Ethereum, it powers automated market makers with verified edges and no data leaks. QuillAudits highlights real-world applications like this, where proofs validate inference without exposing the model.

In bond pricing, convolutional layers model yield curves from confidential issuer data. Setups that pair ZK proofs of neural networks with Ethereum attest that the model was evaluated correctly, enabling on-chain swaps with less reliance on oracles. From my CFA vantage, this fortifies fundamental analysis: zkML on Ethereum embeds privacy in discounted cash flow models, though a proof certifies the computation, not the quality of the data fed into it.

Running inference in zero-knowledge is arduous, but it yields three pillars for decentralized AI: privacy, verifiability, and scalability.

ChainScore Labs' walkthroughs equip devs for neural circuit design, from embeddings to attention heads. GitHub's awesome-zero-knowledge curates RISC0 zkVM ports, easing Ethereum integration. Binance deep dives affirm: custom ML gates unlock non-linear proofs without field hacks.

Challenges linger and deserve a conservative assessment. Proof latency still runs to minutes for large networks, favoring hybrid prover networks. Full Danksharding should ease Ethereum's data costs, but for now Layer 2 deployment seems prudent. Overhype risks abound; focus on circuits that prove tangible value, such as ZKAudit's tensor verifications for data markets.

Horace Pan's tutorials in the 0xPARC repo provide battle-tested demos, from toy classifiers to Ethereum-ready provers. This ecosystem, maturing since ICME's 2025 forecasts, positions zkML on Ethereum as a bulwark for private neural network inference. Investors eye protocols tokenizing proof markets; select those with audited circuits and deflationary economics, echoing timeless value tenets.

Ultimately, zkML on Ethereum redefines AI in finance: cryptographic primitives supplant fragile trust, yielding stable, verifiable intelligence. As deployments proliferate, from inference marketplaces to audited models, the blockchain cements privacy as infrastructure, rewarding patient builders with enduring edges.
