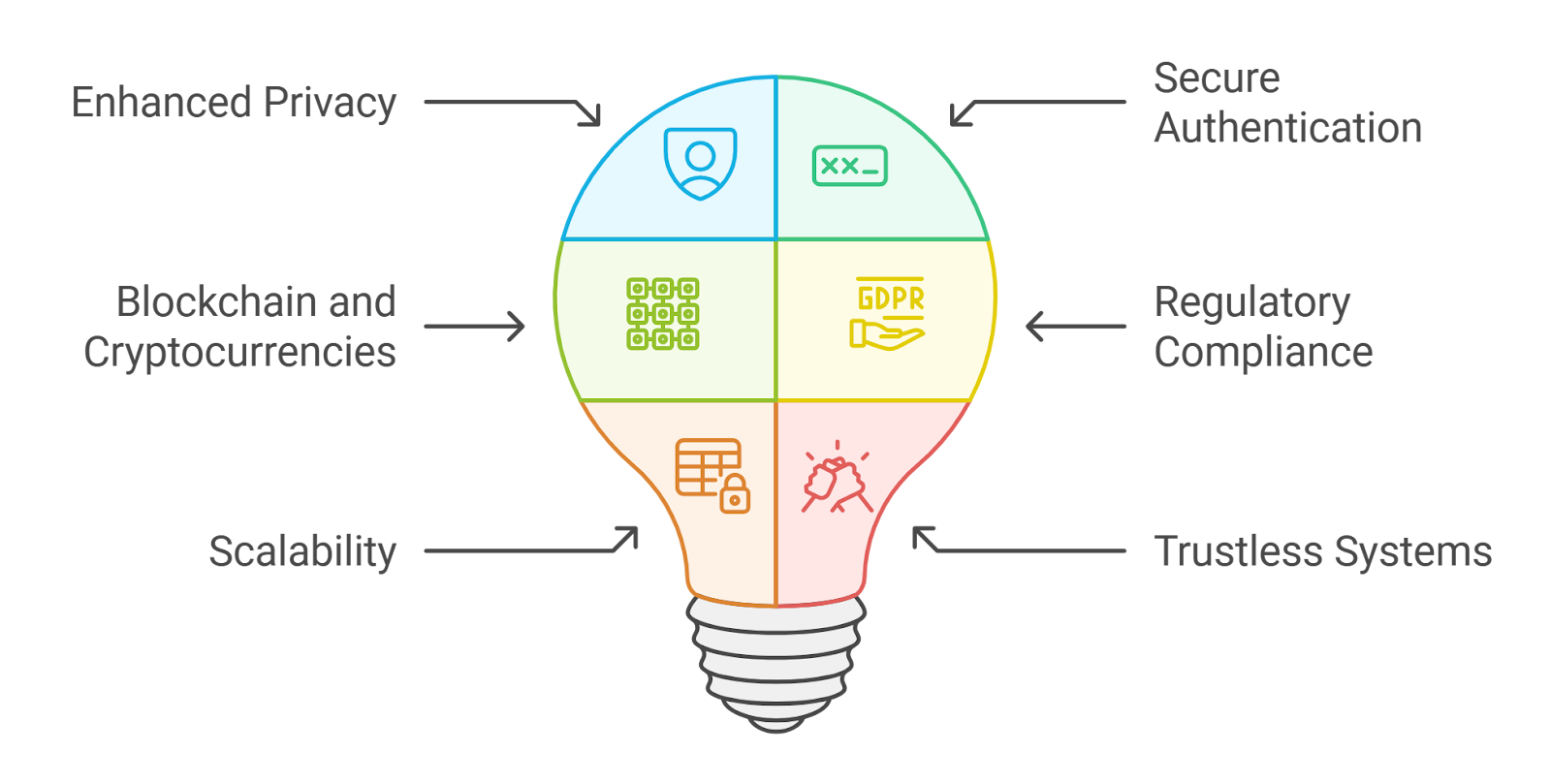

In the rush to harness large language models for specialized tasks, organizations grapple with a stark reality: fine-tuning these behemoths demands vast troves of sensitive data, often exposing trade secrets, patient records, or proprietary strategies. Enter zkML for privacy-preserving LLM fine-tuning, where zero-knowledge proofs fortify federated pipelines, letting collaborators train models collectively without ever peeking at each other's data. This fusion doesn't just safeguard privacy; it unlocks verifiable intelligence across silos, from healthcare consortia to decentralized finance alliances.

Federated learning has long promised a solution, allowing nodes to compute local updates and aggregate them centrally without raw data transmission. Yet, vulnerabilities persist - malicious actors can poison gradients, or central servers might infer private info from model deltas. That's where zero-knowledge proofs in AI models step in, proving computations correct without revealing inputs. zkLLM, the pioneering protocol for LLMs, exemplifies this by generating succinct proofs for inference chains, slashing trust assumptions in multi-party setups.
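The aggregation step described above can be sketched in a few lines. This is a minimal illustration in plain Python, assuming FedAvg-style weighting by local example count; the function name `federated_average` and the sample values are hypothetical and not taken from any of the protocols discussed here.

```python
from typing import List, Sequence


def federated_average(updates: Sequence[Sequence[float]],
                      num_examples: Sequence[int]) -> List[float]:
    """Weighted mean of client update vectors (FedAvg-style)."""
    if not updates or len(updates) != len(num_examples):
        raise ValueError("need one example count per client update")
    dim = len(updates[0])
    if any(len(u) != dim for u in updates):
        raise ValueError("all updates must have the same length")
    total = sum(num_examples)
    if total <= 0:
        raise ValueError("total example count must be positive")
    return [
        sum(n * u[i] for u, n in zip(updates, num_examples)) / total
        for i in range(dim)
    ]


# Two honest clients and one poisoned update (made-up numbers).
honest = [[0.10, -0.20], [0.12, -0.18]]
poisoned = [[50.0, 50.0]]

clean_avg = federated_average(honest, [100, 100])
tainted_avg = federated_average(honest + poisoned, [100, 100, 100])
```

Plain averaging has no way to tell the poisoned vector from the honest ones, so a single outlier drags the global update far from `clean_avg`. That gap is what proof-backed pipelines aim to close.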

Unmasking the Shadows in Traditional Fine-Tuning

Picture a consortium of banks fine-tuning an LLM for fraud detection. Sharing transaction logs? A non-starter under regulations like GDPR. Even anonymized data risks re-identification through model inversion attacks. Conventional federated approaches avoid sharing raw data but falter on verifiability: how do you confirm a participant's update isn't sabotaged? zkML bridges this gap by embedding SNARKs - succinct non-interactive arguments of knowledge - into the pipeline. Each participant attaches a zk-SNARK showing that its gradient update was computed as specified, so integrity can be checked without disclosing the underlying data.

I've seen this play out in DeFi trading forums at zkmlai.org, where quants debate community-built tools for confidential AI signals. Without zkML, you're blind to whether a model's edge stems from genuine patterns or adversarial tweaks. With it, proofs act as cryptographic receipts, fostering trust in high-stakes environments.

Forging zkML Pipelines for Federated LLM Harmony

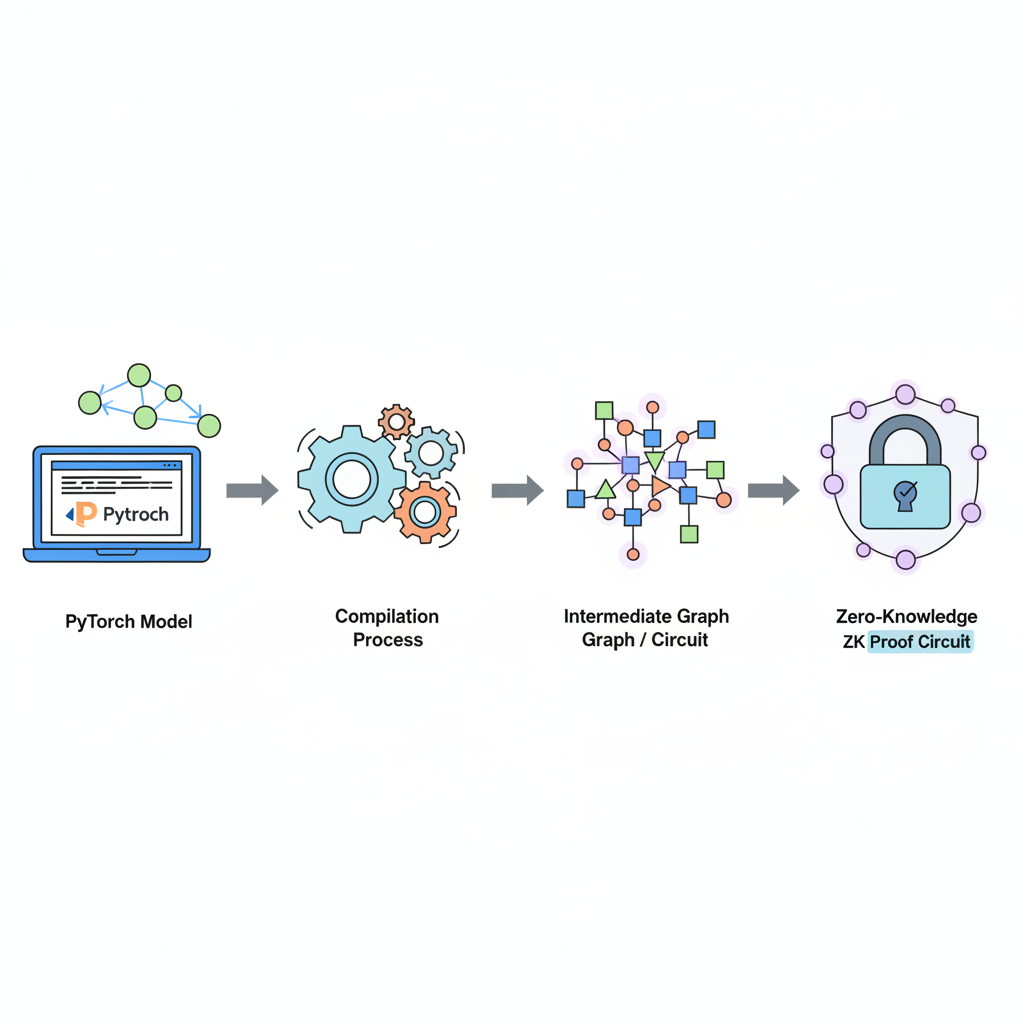

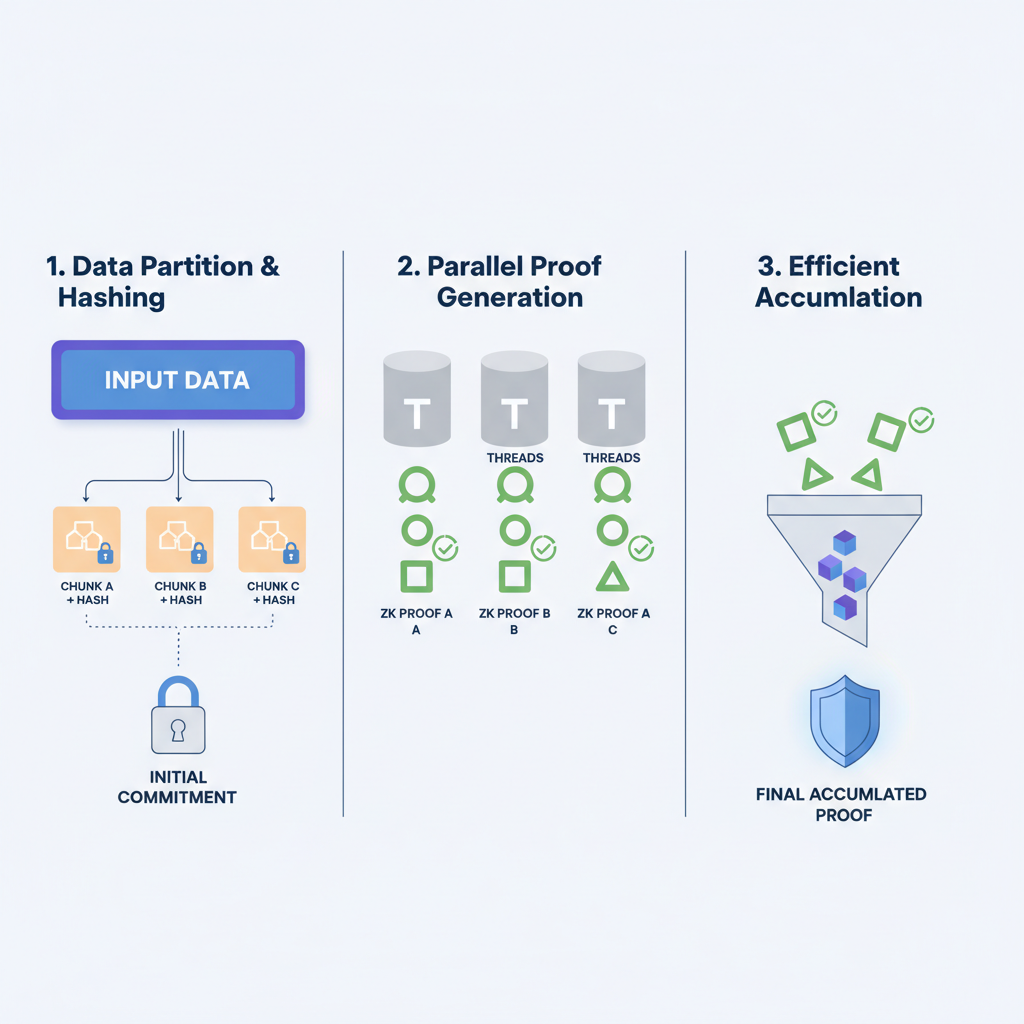

At its core, a confidential zkML pipeline orchestrates fine-tuning as follows: clients run local epochs on private datasets, generate ZKPs attesting to honest execution, then submit masked updates to a coordinator. The aggregator verifies the proofs in bulk, reconstructs the global model, and broadcasts it back, with every step backed by a proof. Tools like ZKTorch accelerate this by compiling PyTorch ops into ZK circuits with parallel accumulation, aiming to make LLM-scale proofs feasible on commodity hardware.
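To make the "masked updates" step concrete, here is a rough sketch of pairwise additive masking, a common secure-aggregation idea in which masks cancel once all submissions are summed. It is an illustration under stated assumptions, not the scheme used by ZKTorch or any protocol named in this article: the modulus, the fixed-point scale, the seeded `random.Random` (a stand-in for real pairwise key agreement, and not cryptographically secure), and the boolean `proof_ok` flag (a stand-in for actual ZKP verification) are all hypothetical.

```python
import random
from typing import Dict, List, Tuple

PRIME = 2**61 - 1   # modulus for masked arithmetic (illustrative choice)
SCALE = 10**6       # fixed-point scale for encoding floats as integers


def encode(x: float) -> int:
    """Map a float to a fixed-point residue modulo PRIME."""
    return round(x * SCALE) % PRIME


def decode(v: int) -> float:
    """Inverse of encode; residues above PRIME // 2 represent negatives."""
    if v > PRIME // 2:
        v -= PRIME
    return v / SCALE


def pairwise_masks(client_ids: List[str], dim: int, seed: int) -> Dict[str, List[int]]:
    """For each pair (a, b), a adds a random vector and b subtracts it,
    so all masks sum to zero modulo PRIME."""
    rng = random.Random(seed)  # NOT secure; stand-in for pairwise key agreement
    masks = {c: [0] * dim for c in client_ids}
    for i, a in enumerate(client_ids):
        for b in client_ids[i + 1:]:
            shared = [rng.randrange(PRIME) for _ in range(dim)]
            for k, s in enumerate(shared):
                masks[a][k] = (masks[a][k] + s) % PRIME
                masks[b][k] = (masks[b][k] - s) % PRIME
    return masks


def mask_update(update: List[float], mask: List[int]) -> List[int]:
    """Client side: hide the encoded update behind the client's mask."""
    return [(encode(u) + m) % PRIME for u, m in zip(update, mask)]


def aggregate(submissions: List[Tuple[List[int], bool]]) -> List[float]:
    """Coordinator side: sum masked updates once every proof flag is True."""
    if not submissions:
        raise ValueError("no submissions")
    # Dropping a single client would leave its pairwise masks uncancelled,
    # so this simplified sketch aborts the round on any failed proof.
    if not all(proof_ok for _, proof_ok in submissions):
        raise ValueError("round aborted: at least one proof failed")
    dim = len(submissions[0][0])
    return [decode(sum(m[k] for m, _ in submissions) % PRIME) for k in range(dim)]


updates = {"bank_a": [0.5, -1.0], "bank_b": [0.25, 0.75], "bank_c": [-0.1, 0.05]}
masks = pairwise_masks(list(updates), dim=2, seed=7)
masked = {c: mask_update(u, masks[c]) for c, u in updates.items()}
global_sum = aggregate([(m, True) for m in masked.values()])
```

The coordinator recovers only the sum of the updates; each individual submission looks like random residues. Real deployments also have to handle client dropouts, which this sketch sidesteps by aborting the round.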

zkML Advantages in LLM FL

- Data Sovereignty Without Isolation: Retain full data control in federated training without silos, as in Artemis SNARKs.

- Byzantine-Robust Aggregation: Defend against malicious clients with ZKPs; ByzSFL achieves 100x faster secure aggregation.

- Verifiable Model Provenance: Cryptographically prove model origins and integrity sans data exposure, via zkPyTorch.

- Scalable to Billion-Parameter LLMs: Efficient proofs for massive models; ZK-HybridFL ensures low-latency convergence.

- Regulatory Compliance Baked In: Inherent GDPR/HIPAA alignment through ZKPs in FL pipelines like zkLLM.

This isn't theoretical fluff. Recent strides, like Polyhedra's zkPyTorch, let developers write vanilla ML code that auto-translates to verifiable circuits. No more wrestling elliptic curves; just plug in your federated loop and prove away.

Pioneering Protocols Lighting the zkML Frontier

2024's Artemis protocol slashed commitment verification overhead by nearly 10x for vision models, paving the way for LLM analogs. Imagine applying that to fine-tuning: provers churn through epochs, commit weights via efficient SNARKs, and verifiers nod in milliseconds. Fast-forward to 2026, ZK-HybridFL weaves DAG ledgers with ZKPs for decentralized FL, hitting faster convergence and adversarial resilience - ideal for privacy-preserving LLM federated learning in Web3.
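For readers new to the "commit weights" idea, the sketch below shows only the commitment half, using an ordinary salted SHA-256 hash. This is deliberately not Artemis's commit-and-prove construction: a hash commitment can only be checked by revealing the weights, whereas a commit-and-prove SNARK lets the verifier check statements about committed weights without seeing them. The function names are hypothetical.

```python
import hashlib
import hmac
import secrets
import struct
from typing import List, Tuple


def _serialize(weights: List[float]) -> bytes:
    """Pack weights as little-endian float64 values."""
    return struct.pack(f"<{len(weights)}d", *weights)


def commit_weights(weights: List[float]) -> Tuple[str, bytes]:
    """Return (commitment, nonce). The random nonce hides the weights
    from anyone trying to guess them from the digest."""
    nonce = secrets.token_bytes(32)
    digest = hashlib.sha256(nonce + _serialize(weights)).hexdigest()
    return digest, nonce


def open_commitment(digest: str, nonce: bytes, weights: List[float]) -> bool:
    """Check that (nonce, weights) matches a previously published digest."""
    candidate = hashlib.sha256(nonce + _serialize(weights)).hexdigest()
    return hmac.compare_digest(candidate, digest)


digest, nonce = commit_weights([0.1, 0.2, 0.3])
matches = open_commitment(digest, nonce, [0.1, 0.2, 0.3])
tampered = open_commitment(digest, nonce, [0.1, 0.2, 0.30001])
```

Publishing `digest` before training pins a participant to one set of weights; any later change, however small, fails the check.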

ByzSFL from 2025 ups the ante with Byzantine tolerance, offloading aggregation math to clients via lightweight ZKPs, clocking 100x speedups. These aren't incremental; they're paradigm shifts, turning federated pipelines from fragile daisy chains into ironclad networks. In my hybrid analysis work, blending zkML-verified signals with fundamentals yields portfolios that sleep soundly, even amid volatility.
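The idea of pushing aggregation math to clients can be illustrated with a toy example. The sketch below assumes a simple norm-clipping rule as the aggregation weight, which is a swapped-in technique chosen for brevity and not ByzSFL's actual weighting scheme. The coordinator's check recomputes the weight in the clear purely as a placeholder; in a real system that check would be a ZKP verification that never sees the update. `CLIP_NORM`, `Submission`, and the function names are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List

CLIP_NORM = 1.0  # hypothetical bound on update norm agreed by all parties


def l2_norm(v: List[float]) -> float:
    return math.sqrt(sum(x * x for x in v))


def local_weight(update: List[float], clip_norm: float = CLIP_NORM) -> float:
    """Client side: scale factor that clips the update to clip_norm."""
    norm = l2_norm(update)
    return 1.0 if norm <= clip_norm else clip_norm / norm


@dataclass
class Submission:
    update: List[float]
    claimed_weight: float


def weight_is_honest(sub: Submission, tol: float = 1e-9) -> bool:
    """Placeholder for proof verification: recomputes the weight in the clear."""
    return abs(sub.claimed_weight - local_weight(sub.update)) <= tol


def robust_aggregate(subs: List[Submission]) -> List[float]:
    """Average the weighted updates of clients whose weight claim checks out."""
    accepted = [s for s in subs if weight_is_honest(s)]
    if not accepted:
        raise ValueError("no submission passed verification")
    dim = len(accepted[0].update)
    return [
        sum(s.claimed_weight * s.update[k] for s in accepted) / len(accepted)
        for k in range(dim)
    ]


honest = Submission([0.3, 0.4], local_weight([0.3, 0.4]))       # norm 0.5, weight 1.0
oversized = Submission([30.0, 40.0], local_weight([30.0, 40.0]))  # norm 50, clipped
liar = Submission([30.0, 40.0], 1.0)                            # lies about its weight

result = robust_aggregate([honest, oversized, liar])
```

The lying client is rejected outright, and the oversized but honestly weighted update is scaled down to the agreed norm before it reaches the average.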

DeFi quants on zkmlai.org forums are already prototyping these pipelines, sharing open-source zkPyTorch snippets for confidential backtesting. zkPyTorch stands out by bridging the developer gap - write your federated fine-tuning script in familiar PyTorch, and it spits out ZK circuits ready for deployment. This democratizes zkML-based LLM fine-tuning, pulling privacy tech from ivory towers into everyday workflows.

Benchmarking zkML Against the Old Guard

To grasp the leap, consider head-to-head performance. Traditional federated learning struggles under Byzantine threats, with aggregation latency ballooning as nodes scale. zkML protocols flip the script: ByzSFL cuts central compute by offloading the aggregation-weight calculation to clients, who back it with lightweight ZKPs, for a reported 100x speedup over existing solutions without sacrificing robustness. ZK-HybridFL's DAG-sidechain hybrid reports faster convergence and higher accuracy, even with adversarial nodes in the mix.

Comparison of zkML Protocols for Privacy-Preserving LLM Fine-Tuning

| Protocol | Key Innovation | Performance Improvements | Publication Date | Reference |
|---|---|---|---|---|
| Artemis | Efficient Commit-and-Prove SNARKs for zkML | 9x overhead reduction (commitment check from 11.5x to 1.2x for VGG model) | September 2024 | [arXiv:2409.12055](https://arxiv.org/abs/2409.12055) |
| ZK-HybridFL | Zero-Knowledge Proof-Enhanced Hybrid Ledger for Federated Learning | Faster convergence, higher accuracy, reduced latency, robust against adversarial nodes | January 2026 | [arXiv:2601.22302](https://arxiv.org/abs/2601.22302) |
| ByzSFL | Byzantine-Robust Secure Federated Learning with Zero-Knowledge Proofs | 100x speedup over existing solutions | January 2025 | [arXiv:2501.06953](https://arxiv.org/abs/2501.06953) |
| zkPyTorch | Verifiable PyTorch with Zero-Knowledge Proofs | PyTorch-native verifiable circuits for scalable privacy-preserving ML | Mid-2025 | [Polyhedra Network](https://blog.polyhedra.network/zkpytorch/) |
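As a quick sanity check on the Artemis row, and assuming both figures are multiplicative overhead factors measured against the same baseline, the improvement works out as:

$$
\frac{11.5}{1.2} \approx 9.58
$$

That is consistent with both the "9x" in the table and the "nearly 10x" quoted earlier; the two phrasings round the same ratio in different directions.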

These gains aren't lab curiosities. In healthcare, where federated LLM fine-tuning on siloed patient data is gold, zkML ensures proofs of ethical training. Banks fine-tune fraud detectors across branches; proofs confirm no data leakage. Web3 DAOs crowdsource model upgrades, verifiable on-chain. My portfolios lean on such signals - zk-verified sentiment models from public forums, fused with balance sheets, spotting edges regulators can't touch.

Yet hurdles remain. Proving billion-parameter gradients devours cycles; recursive SNARKs help, but hardware acceleration lags. Artemis tackles commitment bloat, but LLM-specific tweaks - like sparse attention proofs - beckon. Still, momentum builds. zkPyTorch's mid-2025 launch signals maturity; pair it with ZKTorch's parallel tricks, and we're inches from production-grade zero-knowledge proofs for AI models.

Blueprints for Tomorrow's Pipelines

Envision a federated zkML stack: zkPyTorch compiles your fine-tune loop, ByzSFL aggregates securely, ZK-HybridFL logs provably on DAGs. Clients stake on honest proofs; slashers punish fakers. This isn't sci-fi; prototypes hum in zkmlai.org threads, where devs iterate community tools. I've stress-tested similar setups - feeding zkLLM proofs into trading bots yields signals untainted by manipulation, balancing my medium-risk tilt.
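The stake-and-slash incentive is easy to picture with a toy ledger. The sketch below is purely illustrative: `StakeRegistry`, the 50% slash fraction, and the stub verifier are hypothetical, and a real deployment would run this logic on-chain with an actual proof verifier in place of the callable.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class StakeRegistry:
    slash_fraction: float = 0.5                       # hypothetical penalty
    stakes: Dict[str, float] = field(default_factory=dict)

    def deposit(self, client: str, amount: float) -> None:
        """Add to a client's stake."""
        if amount <= 0:
            raise ValueError("deposit must be positive")
        self.stakes[client] = self.stakes.get(client, 0.0) + amount

    def settle(self, client: str, proof: bytes,
               verify: Callable[[bytes], bool]) -> bool:
        """Accept the update if the proof verifies; otherwise slash the stake."""
        if client not in self.stakes:
            raise KeyError(client)
        if verify(proof):
            return True
        self.stakes[client] *= (1.0 - self.slash_fraction)
        return False


def stub_verifier(proof: bytes) -> bool:
    """Stand-in for a real ZK verifier; accepts one hard-coded byte string."""
    return proof == b"valid-proof"


registry = StakeRegistry()
registry.deposit("client_a", 100.0)
registry.deposit("client_b", 100.0)

accepted_a = registry.settle("client_a", b"valid-proof", stub_verifier)
accepted_b = registry.settle("client_b", b"forged", stub_verifier)
```

After this round `client_a` keeps its full stake while `client_b` loses half of it, which is the economic pressure the "slashers punish fakers" line refers to.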

Challenges like proof recursion for deep LLMs persist, but innovations cascade. Polyhedra's toolkit hints at GPU-native circuits, compressing epochs into seconds. For quants, this means confidential alphas shared sans dilution. Healthcare sees compliant diagnostics; enterprises, moats deepened by verifiability.

Collaborating across zkmlai.org, we've glimpsed portfolios where zkML signals harmonize with fundamentals, volatility be damned. Dive into these protocols, prototype on zkPyTorch, and fortify your pipelines. The frontier awaits - privacy intact, intelligence amplified.
