As model outputs increasingly influence high-stakes decisions in finance and healthcare, the demand for verifiable computation keeps growing. Zero-knowledge machine learning (zkML) answers that demand with verifiable inference: users can confirm that an AI inference was computed correctly without seeing the underlying data or model parameters. This privacy-preserving approach matters particularly to conservative investors like myself, who prioritize data integrity in portfolio analysis amid rising concerns over AI opacity and deepfakes.

The Imperative for Privacy-Preserving AI Proofs

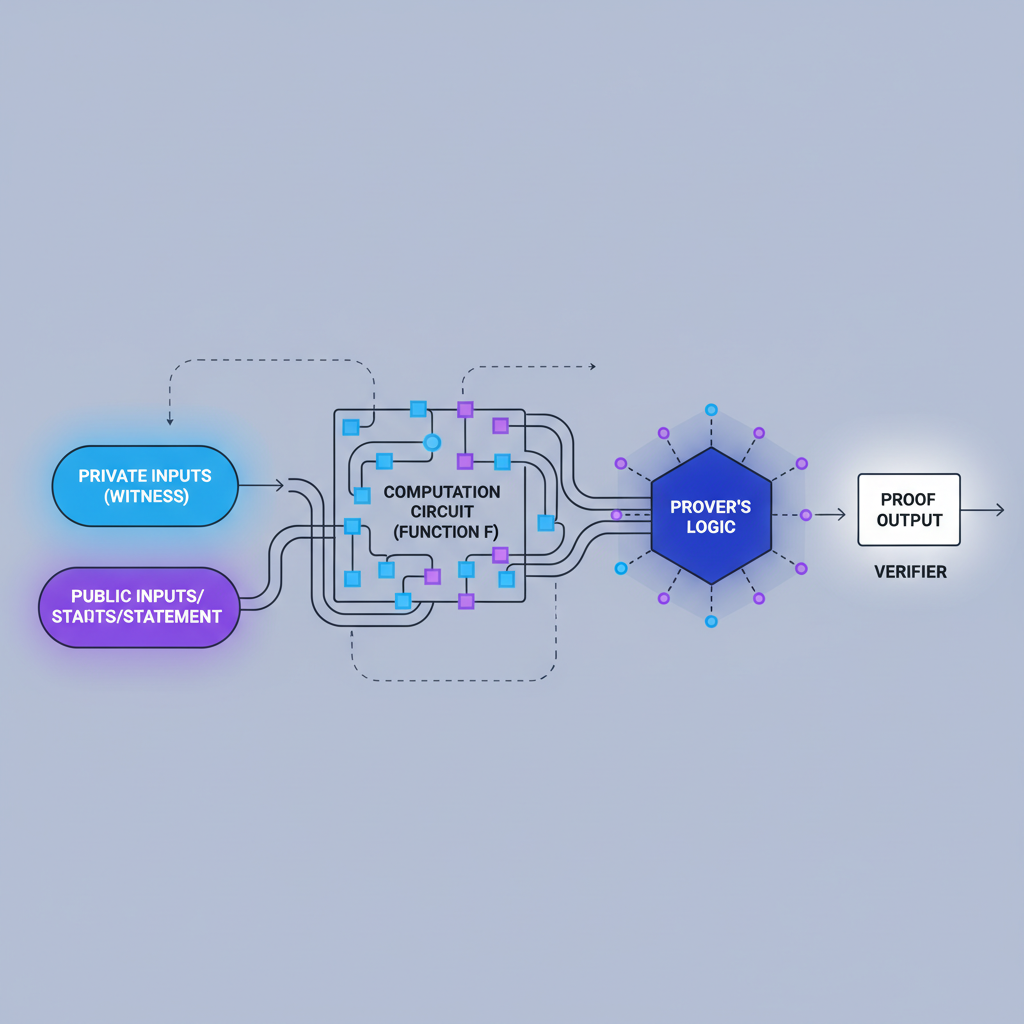

Traditional machine learning inference is a black box: the recipient of a prediction has no way to check which model produced it or how. That fosters distrust, especially in decentralized environments. zkML addresses this by using zero-knowledge proofs (ZKPs) to attest that an inference followed a specified model and input exactly, while keeping sensitive information hidden. Recent work, such as research highlighted by Kudelski Security and in arXiv surveys, points to frameworks like ExpProof for verifying model fairness under privacy constraints. For instance, improved protocols for decision trees enable privacy-preserving verifiable inference, mitigating risks in applications from fraud detection to credit scoring.

Inference Labs stands at the forefront of this shift, building blockchain-based decentralized AI tools that integrate with standard ML workflows. Projections cited in the company's media coverage put the market for verifiable inference at $10-15B by 2025, spanning finance, telecom, and robotics. This growth trajectory aligns with conservative strategies, where verifiable AI can underpin stable, long-term investment models without exposing proprietary datasets.

JSTprove: Streamlining ONNX to Verifiable Proofs

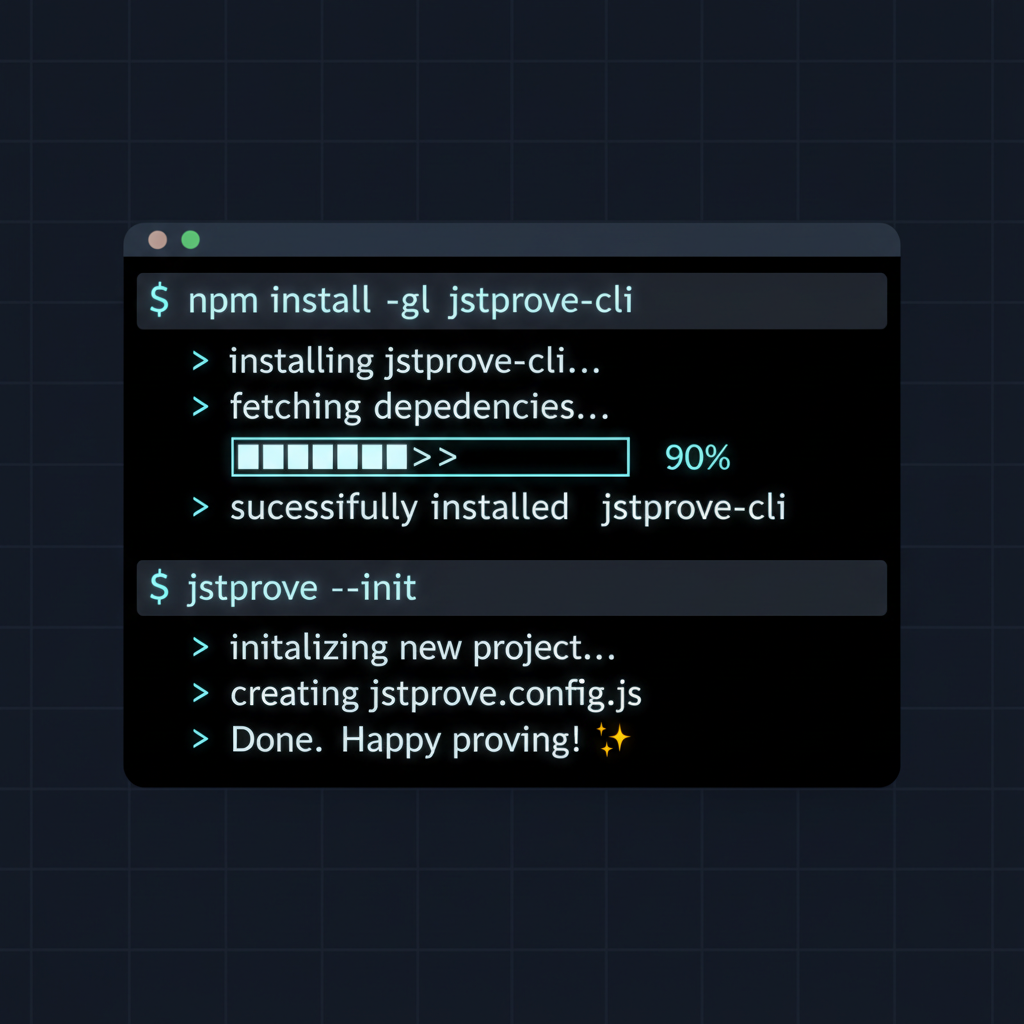

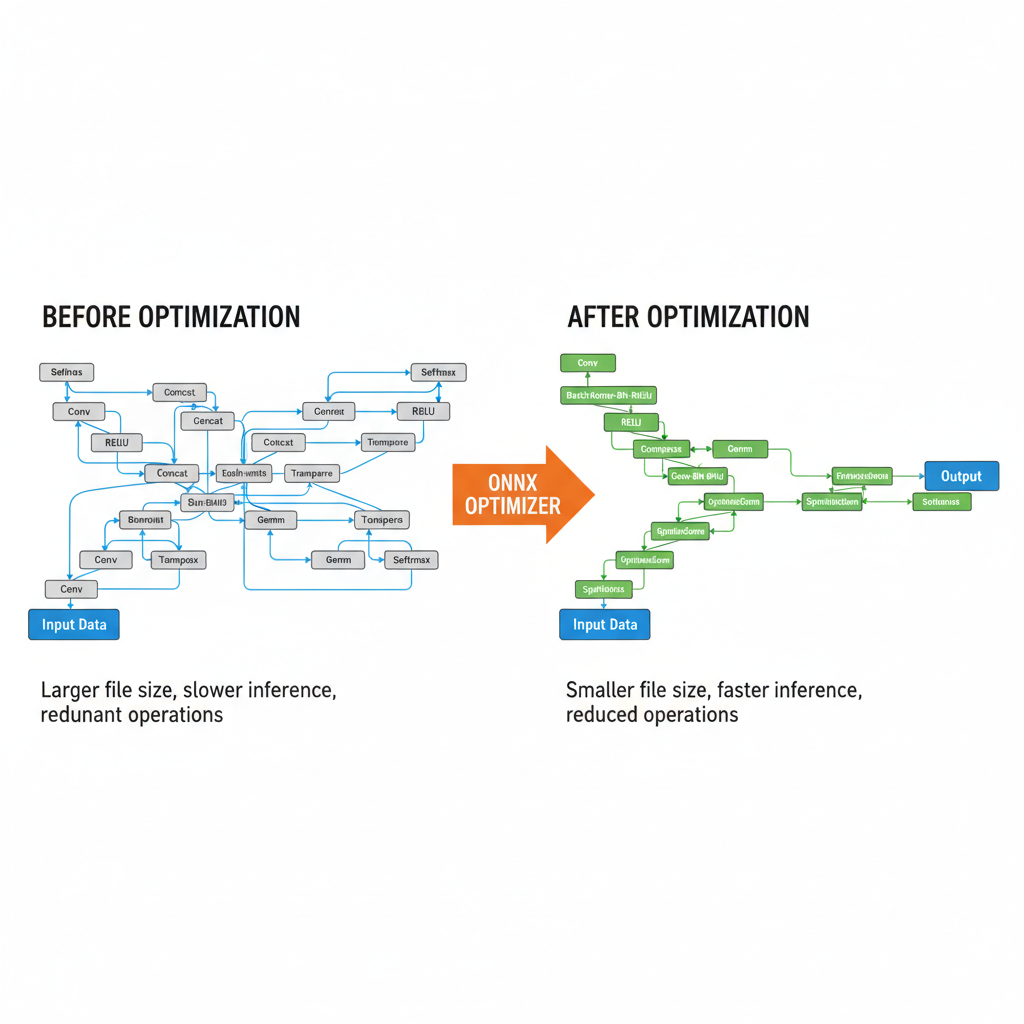

Inference Labs' JSTprove toolkit is a pragmatic step forward for bringing ONNX models into zkML. This command-line interface converts standard ONNX models into auditable ZK proofs and is built atop Polyhedra Network's Expander backend. Developers can generate proofs for neural networks with little friction, which supports reproducibility and transparency in zkML pipelines. From my perspective as a portfolio manager, this efficiency could reshape fundamental analysis, allowing zkML-enhanced models to process confidential financial data off-chain while their outcomes are verified on-chain.

zkML enables ML inference to be executed off-chain, with only the proof of inference verified on-chain.

This off-chain execution model, detailed in ScienceDirect overviews, reduces gas costs and latency because the chain checks a proof instead of re-running the model, which makes zkML viable for real-world deployment. Polyhedra's complementary zkPyTorch compiler widens access further by converting PyTorch models directly into ZK-compatible programs, extending JSTprove's reach.

Core Operations and Backend Synergies

JSTprove's strength lies in its support for essential ML primitives: convolutions, ReLU activations, max pooling, and fully connected layers. These form the backbone of convolutional neural networks (CNNs), as seen in GitHub projects like pvCNN for privacy-preserving verification. The Expander backend optimizes proof generation, balancing speed against proof size, which is critical for conservative adoption where computational overhead must not erode returns.
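To make these primitives concrete, here is a minimal sketch of how a fully connected layer, ReLU, and max pooling can be expressed in integer fixed-point arithmetic, the kind of arithmetic that proof circuits generally work with in place of floating point. The scale factor `SCALE`, the function names, and the one-dimensional pooling are my own assumptions for illustration; this is not JSTprove's or Expander's actual quantization scheme.

```python
# Illustrative only: SCALE and all function names are hypothetical,
# not taken from JSTprove or Expander.
SCALE = 2 ** 8  # fixed-point scale: the real value 1.0 is stored as the integer 256


def quantize(x: float) -> int:
    """Map a real number to its fixed-point integer representation."""
    return round(x * SCALE)


def dense(weights, inputs, bias):
    """Fully connected layer over fixed-point integers.

    Each product w * x carries a factor of SCALE**2, so the accumulated
    sum is divided by SCALE once to return to the working scale.
    """
    out = []
    for row, b in zip(weights, bias):
        acc = sum(w * x for w, x in zip(row, inputs))
        out.append(acc // SCALE + b)
    return out


def relu(values):
    """ReLU is a comparison against zero, which works unchanged on integers."""
    return [max(0, v) for v in values]


def max_pool_1d(values, window):
    """Non-overlapping 1D max pooling (a simplification of the 2D case)."""
    return [
        max(values[i:i + window])
        for i in range(0, len(values) - window + 1, window)
    ]


if __name__ == "__main__":
    weights = [[quantize(0.5), quantize(-1.0)], [quantize(1.0), quantize(1.0)]]
    inputs = [quantize(2.0), quantize(3.0)]
    bias = [quantize(0.0), quantize(0.5)]
    pooled = max_pool_1d(relu(dense(weights, inputs, bias)), window=2)
    print([v / SCALE for v in pooled])
```

The point of the sketch is that every step is an integer addition, multiplication, division by a constant, or comparison. Those are operations a circuit can constrain, which is why this particular set of layers is a natural first target for a proving toolkit.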

SotaZK resources emphasize how such systems verify entire ML processes without exposing parameters, and DEV Community pieces argue that the same approach can foster trust in tamper-proof LLMs trained on legitimate data. For financial applications, this means zkML can secure sentiment analysis of earnings calls or bond yield predictions built on proprietary indicators, all verifiable yet private.

Yet realizing this potential demands tools that bridge the gap between conventional ML pipelines and cryptographic rigor, without introducing complexity that could deter institutional adoption. Inference Labs' JSTprove excels here, offering a conservative entry point to verifiable inference that aligns with the measured pace of value investing.

Implementing Verifiable Inference: A Conservative Roadmap

To integrate Inference Labs' ONNX-based zkML tooling into existing workflows, developers follow a structured path that emphasizes reproducibility and minimal overhead. This approach suits portfolio managers seeking to enhance models for alpha generation, where every computational step must be auditable yet shielded from competitors. JSTprove's simple CLI masks sophisticated optimizations, drawing on the Expander backend to handle proof aggregation efficiently across layers.

Once proofs are generated, verification becomes a lightweight on-chain operation, conserving resources in decentralized finance protocols. This off-chain inference paradigm, as outlined in on-chain zkML overviews, positions zkML as a stabilizing force against the volatility of unverified AI outputs. In my experience managing portfolios through market cycles, such verifiability could have preempted anomalies in algorithmic trading signals, preserving capital during downturns.

Consider a hypothetical bond yield predictor trained on proprietary macroeconomic indicators. Export the model to ONNX, process it with JSTprove, and obtain a proof attesting to the inference's fidelity. Stakeholders verify this proof on-chain, confirming the yield forecast without accessing the model's weights or input data. This scenario exemplifies privacy-preserving AI proofs, enabling collaborative DeFi applications where multiple funds contribute insights without mutual exposure.
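The split between what stays private and what becomes public in this scenario can be sketched in a few lines. The example below uses salted SHA-256 hash commitments to stand in for the public statement a proof would be bound to. It is not a zero-knowledge proof and it does not reflect JSTprove's actual output format; the field names, the helper functions, and the toy linear "model" are all assumptions made for illustration.

```python
import hashlib
import json
import secrets

# Illustrative only: helper names and statement fields are hypothetical.


def commit(value, salt: bytes) -> str:
    """Salted SHA-256 commitment to a JSON-serializable value."""
    payload = json.dumps(value, sort_keys=True).encode("utf-8")
    return hashlib.sha256(salt + payload).hexdigest()


def build_public_statement(weights, inputs, output, weight_salt, input_salt):
    """Assemble what a verifier would see: commitments plus the claimed output.

    The weights and inputs themselves never appear in the statement.
    """
    return {
        "model_commitment": commit(weights, weight_salt),
        "input_commitment": commit(inputs, input_salt),
        "output": output,
    }


def opens_to(commitment: str, value, salt: bytes) -> bool:
    """Check that a commitment matches a value the owner later reveals."""
    return secrets.compare_digest(commitment, commit(value, salt))


if __name__ == "__main__":
    weights = [[3, -1], [2, 5]]   # private: stays with the fund
    inputs = [7, 4]               # private: proprietary indicators
    output = [sum(w * x for w, x in zip(row, inputs)) for row in weights]

    weight_salt = secrets.token_bytes(32)
    input_salt = secrets.token_bytes(32)
    statement = build_public_statement(
        weights, inputs, output, weight_salt, input_salt
    )
    print(json.dumps(statement, indent=2))
```

A real ZK proof adds the missing piece: it convinces the verifier that the published output really is the result of running the committed model on the committed input, without anyone ever opening the commitments. The hash commitments alone only pin down which model and input are being talked about.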

Synergies with Polyhedra Ecosystem

Polyhedra Network's contributions amplify JSTprove's impact. Their zkPyTorch compiler streamlines model conversion from PyTorch, a staple in quantitative finance, into ZK circuits. This synergy reduces friction for data scientists transitioning to verifiable systems, supporting operations central to financial modeling like recurrent layers for time-series forecasting. Expander's role in proof recursion ensures scalability, vital as models grow in depth and parameters.

From a conservative standpoint, these integrations mitigate two key risks: proof size inflation and prover latency. GitHub repositories such as the ZKML optimizing system demonstrate tangible progress in compressing proofs for CNNs, making deployment feasible on resource-constrained blockchains. Inference Labs' zkml-blueprints repository helps further by providing circuit templates, allowing customization without reinventing primitives.

Broader implications extend to combating deepfakes in market intelligence. As LinkedIn analyses note, ZKPs can make synthetic media verifiable or debunkable, protecting investors from manipulated earnings visuals or executive statements. In an era of proliferating LLMs, zkML can also help attest to the legitimacy of training data, a point DEV Community pieces make in advocating tamper-proof models.

Verifiable inference using ZKPs enables trustless, private AI across industries like finance, telecom, and robotics.

This trustlessness underpins decentralized AI marketplaces, where models from Inference Labs compete on proof quality rather than opaque claims. For value investors, the appeal lies in long-term stability: zkML fortifies fundamental analysis against adversarial inputs, aligning with data-driven prudence over speculative hype.

Navigating Adoption Barriers Conservatively

Despite its promise, zkML faces hurdles that warrant scrutiny. Proving large models remains computationally intensive, though Expander optimizations narrow the gap. Conservative adopters should prioritize hybrid approaches, proving the critical inference paths while leaving non-sensitive computations unproven. Inference Labs' focus on standard ONNX avoids retraining costs, a boon for legacy financial models.
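One way to reason about such a hybrid split is to estimate how much of a pipeline's arithmetic sits in the stages that must be proven. The sketch below counts multiplications per stage as a rough proxy for proving cost and reports the share that falls on the critical path. The stage names, layer shapes, and the choice of multiplication count as the cost measure are assumptions for illustration, not measurements from JSTprove or Expander.

```python
from dataclasses import dataclass

# Illustrative only: stage names, shapes, and the cost proxy are hypothetical.


@dataclass(frozen=True)
class Stage:
    name: str
    multiplications: int  # rough proxy for proving cost
    critical: bool        # True if this stage must be covered by a proof


def dense_mults(n_in: int, n_out: int) -> int:
    """Multiplications in a fully connected layer."""
    return n_in * n_out


def conv_mults(out_h: int, out_w: int, in_c: int, out_c: int, k: int) -> int:
    """Multiplications in a 2D convolution with a k x k kernel."""
    return out_h * out_w * in_c * out_c * k * k


def plan(stages):
    """Split stages into proven and unproven, and report the proven cost share."""
    proven = [s for s in stages if s.critical]
    unproven = [s for s in stages if not s.critical]
    total = sum(s.multiplications for s in stages)
    proven_cost = sum(s.multiplications for s in proven)
    share = proven_cost / total if total else 0.0
    return proven, unproven, share


if __name__ == "__main__":
    pipeline = [
        Stage("feature_extractor_conv", conv_mults(26, 26, 1, 8, 3), critical=False),
        Stage("scoring_head_dense", dense_mults(1352, 10), critical=True),
    ]
    proven, unproven, share = plan(pipeline)
    print("proven:", [s.name for s in proven])
    print("unproven:", [s.name for s in unproven])
    print(f"share of multiplications under proof: {share:.1%}")
```

A caveat belongs with any such plan: an unproven stage feeds inputs to the proven one, so the proof only guarantees the critical stage was computed correctly on whatever it was given. Whether that is acceptable depends on who controls the unproven stage and how much damage a bad input could do.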

Regulatory tailwinds favor zkML, as privacy mandates in finance increasingly demand auditable AI. Frameworks like ExpProof, discussed in the Kudelski Security and arXiv literature, pave the way for fairness verification, which is essential for equitable credit models. arXiv protocols for decision trees offer scalable alternatives to neural nets, well suited to interpretable investing strategies.

Looking ahead, the projected $10-15B market by 2025 signals maturation, but investors should temper enthusiasm with proof-of-concept pilots. zkML.ai resources, including tutorials and forums, equip practitioners to experiment safely. As a CFA charterholder, I view zkML not as a panacea but as a prudent evolution in AI governance, one that can secure alpha in privacy-first portfolios.

By embedding verifiable inference into core workflows, Inference Labs points toward a future where AI-driven decisions can be checked instead of taken on faith. This conservative pivot toward verifiable inference promises resilience across cycles, rewarding those who invest in verifiable truth over unverifiable promise.
